Flair NLP and flaiR for Social Science

Flair NLP is an open-source Natural Language Processing (NLP) library developed by Zalando Research. Known for its state-of-the-art solutions, it excels in contextual string embeddings, Named Entity Recognition (NER), and Part-of-Speech tagging (POS). Flair offers robust text analysis tools through multiple embedding approaches, including Flair contextual string embeddings, transformer-based embeddings from Hugging Face, and traditional models like GloVe and fasttext. Additionally, it provides pre-trained models for various languages and seamless integration with fine-tuned transformers hosted on Hugging Face.

flaiR brings these NLP features from Python to the R environment. It makes advanced text analysis accessible to social science researchers by combining Flair’s ease of use with R’s familiar interface, and it integrates with popular R packages such as quanteda.

Sentence and Token

Sentence and Token are fundamental classes.

Sentence

A Sentence in Flair is an object that contains a sequence of Token objects, and it can be annotated with labels, such as named entities, part-of-speech tags, and more. It also can store embeddings for the sentence as a whole and different kinds of linguistic annotations.

Here’s a simple example of how you create a Sentence:

# Creating a Sentence object

library(flaiR)

string <- "What I see in UCD today, what I have seen of UCD in its impact on my own life and the life of Ireland."

Sentence <- flair_data()$Sentence

sentence <- Sentence(string)

Sentence[26] means that there are a total of 26 tokens in the sentence.

print(sentence)

#> Sentence[26]: "What I see in UCD today, what I have seen of UCD in its impact on my own life and the life of Ireland."

Token

When you use Flair to handle text data, Sentence and Token objects often play central roles in many use cases. When you create a Sentence object, it automatically tokenizes the text, removing the need to create the Token object manually.

Unlike R, which indexes from 1, Python indexes from 0. Therefore, when using a for loop, I use seq_along(sentence) - 1. The output should be something like:

# The Sentence object automatically creates and contains multiple Token objects

# We can iterate through the Sentence object to view each Token

for (i in seq_along(sentence)-1) {

print(sentence[[i]])

}

#> Token[0]: "What"

#> Token[1]: "I"

#> Token[2]: "see"

#> Token[3]: "in"

#> Token[4]: "UCD"

#> Token[5]: "today"

#> Token[6]: ","

#> Token[7]: "what"

#> Token[8]: "I"

#> Token[9]: "have"

#> Token[10]: "seen"

#> Token[11]: "of"

#> Token[12]: "UCD"

#> Token[13]: "in"

#> Token[14]: "its"

#> Token[15]: "impact"

#> Token[16]: "on"

#> Token[17]: "my"

#> Token[18]: "own"

#> Token[19]: "life"

#> Token[20]: "and"

#> Token[21]: "the"

#> Token[22]: "life"

#> Token[23]: "of"

#> Token[24]: "Ireland"

#> Token[25]: "."

Or you can directly use the $tokens attribute to print all tokens.

print(sentence$tokens)

#> [[1]]

#> Token[0]: "What"

#>

#> [[2]]

#> Token[1]: "I"

#>

#> [[3]]

#> Token[2]: "see"

#>

#> [[4]]

#> Token[3]: "in"

#>

#> [[5]]

#> Token[4]: "UCD"

#>

#> [[6]]

#> Token[5]: "today"

#>

#> [[7]]

#> Token[6]: ","

#>

#> [[8]]

#> Token[7]: "what"

#>

#> [[9]]

#> Token[8]: "I"

#>

#> [[10]]

#> Token[9]: "have"

#>

#> [[11]]

#> Token[10]: "seen"

#>

#> [[12]]

#> Token[11]: "of"

#>

#> [[13]]

#> Token[12]: "UCD"

#>

#> [[14]]

#> Token[13]: "in"

#>

#> [[15]]

#> Token[14]: "its"

#>

#> [[16]]

#> Token[15]: "impact"

#>

#> [[17]]

#> Token[16]: "on"

#>

#> [[18]]

#> Token[17]: "my"

#>

#> [[19]]

#> Token[18]: "own"

#>

#> [[20]]

#> Token[19]: "life"

#>

#> [[21]]

#> Token[20]: "and"

#>

#> [[22]]

#> Token[21]: "the"

#>

#> [[23]]

#> Token[22]: "life"

#>

#> [[24]]

#> Token[23]: "of"

#>

#> [[25]]

#> Token[24]: "Ireland"

#>

#> [[26]]

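#> Token[25]: "."

Retrieve the Token

To comprehend the string representation format of the Sentence object, tagging at least one token is adequate. Python’s get_token(n) method allows us to retrieve the Token object for a particular token. Additionally, we can use [] to index a specific token. Note that the two count differently, as the output below shows: get_token(n) counts tokens from 1 (get_token(5) returns Token[4]), whereas [] uses the Python token index (sentence[6] returns Token[6]).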

# method in Python

sentence$get_token(5)

#> Token[4]: "UCD"

# indexing in R

sentence[6]

#> Token[6]: ","

Each word (and punctuation mark) in the text is treated as an individual Token object. These Token objects store the text along with other possible linguistic information (such as part-of-speech tags or named entity tags) and embeddings (if you used a model to generate them).

While you do not need to create Token objects manually, understanding how to manage them is useful in situations where you might want to fine-tune the tokenization process. For example, you can control the exactness of tokenization by manually creating Token objects from a Sentence object.

This makes Flair very flexible when handling text data since the automatic tokenization feature can be used for rapid development, while also allowing users to fine-tune their tokenization.
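The index offset used in the loops above can be checked in base R without Flair. The following is a minimal sketch, assuming a plain character vector as a stand-in for the tokens (it is not a Flair object) and a hypothetical helper named token_by_py_index:

```r
# Plain character vector standing in for the tokens of a Sentence
# (an assumption for illustration; this is not a Flair object)
tokens <- c("What", "I", "see", "in", "UCD")

# R positions run 1..n, while Python-style token indices run 0..(n - 1)
r_positions <- seq_along(tokens)
py_indices <- r_positions - 1

# Hypothetical helper: look up a token by its Python-style index
token_by_py_index <- function(tokens, index) tokens[[index + 1]]

# Side-by-side view of both numbering schemes
index_map <- data.frame(r_position = r_positions,
                        py_index = py_indices,
                        token = tokens)
print(index_map)
```

Calling token_by_py_index(tokens, 4) returns "UCD", which matches Token[4] in the printed Sentence above.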

Annotate POS tag and NER tag

The add_label(label_type, value) method can be employed to assign a label to the token. In Universal POS tags, sentence[10], ‘seen’, might be tagged as VERB, indicating it is a past participle form of a verb.

sentence[10]$add_label('manual-pos', 'VERB')

print(sentence[10])

#> Token[10]: "seen" → VERB (1.0000)

We can also add a NER (Named Entity Recognition) tag to sentence[4], “UCD”, identifying it as a university in Dublin.

sentence[4]$add_label('ner', 'ORG')

print(sentence[4])

#> Token[4]: "UCD" → ORG (1.0000)

If we print the sentence object, Sentence[26] indicates that it contains 26 tokens, and → ["UCD"/ORG, "seen"/VERB] displays the two pieces of tagging information.

print(sentence)

#> Sentence[26]: "What I see in UCD today, what I have seen of UCD in its impact on my own life and the life of Ireland." → ["UCD"/ORG, "seen"/VERB]

Corpus

The Corpus object in Flair is a fundamental data structure that represents a dataset containing text samples, usually comprising a training set, a development set (or validation set), and a test set. It’s designed to work smoothly with Flair’s models for tasks like named entity recognition, text classification, and more.

Attributes:

- train: A list of sentences (List[Sentence]) that form the training dataset.

- dev (or development): A list of sentences (List[Sentence]) that form the development (or validation) dataset.

- test: A list of sentences (List[Sentence]) that form the test dataset.

Important Methods:

- downsample: This method allows you to downsample (reduce) the number of sentences in the train, dev, and test splits.

- obtain_statistics: This method gives a quick overview of the statistics of the corpus, including the number of sentences and the distribution of labels.

- make_vocab_dictionary: Used to create a vocabulary dictionary from the corpus.

library(flaiR)

Corpus <- flair_data()$Corpus

Sentence <- flair_data()$Sentence

# Create some example sentences

train <- list(Sentence('This is a training example.'))

dev <- list(Sentence('This is a validation example.'))

test <- list(Sentence('This is a test example.'))

# Create a corpus using the custom data splits

corp <- Corpus(train = train, dev = dev, test = test)

The $obtain_statistics() method of the Corpus object in the Flair library provides an overview of the dataset statistics. The method reports details about the training, validation (development), and test datasets that make up the corpus. As the fromJSON() call below shows, the result reaches R as a JSON string, so you can use the jsonlite package to parse and format it.

library(jsonlite)

data <- fromJSON(corp$obtain_statistics())

formatted_str <- toJSON(data, pretty=TRUE)

print(formatted_str)

#> {

#> "TRAIN": {

#> "dataset": ["TRAIN"],

#> "total_number_of_documents": [1],

#> "number_of_documents_per_class": {},

#> "number_of_tokens_per_tag": {},

#> "number_of_tokens": {

#> "total": [6],

#> "min": [6],

#> "max": [6],

#> "avg": [6]

#> }

#> },

#> "TEST": {

#> "dataset": ["TEST"],

#> "total_number_of_documents": [1],

#> "number_of_documents_per_class": {},

#> "number_of_tokens_per_tag": {},

#> "number_of_tokens": {

#> "total": [6],

#> "min": [6],

#> "max": [6],

#> "avg": [6]

#> }

#> },

#> "DEV": {

#> "dataset": ["DEV"],

#> "total_number_of_documents": [1],

#> "number_of_documents_per_class": {},

#> "number_of_tokens_per_tag": {},

#> "number_of_tokens": {

#> "total": [6],

#> "min": [6],

#> "max": [6],

#> "avg": [6]

#> }

#> }

#> }

In R

Below, we use data from the article The Temporal Focus of Campaign Communication by Stefan Muller, published in the Journal of Politics in 2020, as an example.

First, we vectorize cc_muller$text using the Sentence function to transform it into a list object. Then, we reformat cc_muller$class_pro_retro as a factor. It’s essential to note that R handles numerical values differently than Python. In R, numerical values are represented with a floating point, so it’s advisable to convert them into factors or strings. Lastly, we employ the map2 function from the purrr package to assign a label to each Sentence using the $add_label method.

library(purrr)

#>

#> Attaching package: 'purrr'

#> The following object is masked from 'package:jsonlite':

#>

#> flatten

data(cc_muller)

# The `Sentence` object tokenizes text

text <- lapply( cc_muller$text, Sentence)

# Convert the class labels to a factor

labels <- as.factor(cc_muller$class_pro_retro)

# `$add_label` method assigns the corresponding coded type to each Sentence.

text <- map2(text, labels, ~ .x$add_label("classification", .y), .progress = TRUE)

To perform a train-test split using base R, we can follow these steps:

set.seed(2046)

sample <- sample(c(TRUE, FALSE), length(text), replace=TRUE, prob=c(0.8, 0.2))

train <- text[sample]

test <- text[!sample]

sprintf("Corpus object sizes - Train: %d | Test: %d", length(train), length(test))

#> [1] "Corpus object sizes - Train: 4710 | Test: 1148"

If you don’t provide a dev set, flaiR will not force you to carve out a portion of your test set to serve as a dev set. However, in some cases when only the train and test sets are provided without a dev set, flaiR might automatically take a fraction of the train set (e.g., 10%) to use as a dev set (#2259). This offers a mechanism for model selection and helps prevent the model from overfitting on the train set.

In the “Corpus” function, there is a random selection of the "dev" dataset. To ensure reproducibility, we need to set the seed in the flaiR framework. We can accomplish this by calling the top-level module “flair” from flaiR and using $set_seed(1964L) to set the seed.

flair <- import_flair()

flair$set_seed(1964L)

corp <- Corpus(train=train,

# dev=test,

test=test)

#> 2025-10-29 01:21:51,089 No dev split found. Using 10% (i.e. 471 samples) of the train split as dev data

sprintf("Corpus object sizes - Train: %d | Test: %d | Dev: %d",

length(corp$train),

length(corp$test),

length(corp$dev))

#> [1] "Corpus object sizes - Train: 4239 | Test: 1148 | Dev: 471"

In the later sections, there will be more similar processing using the Corpus. Following that, we will focus on advanced NLP applications.
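The split sizes reported above (4710 train samples becoming 4239 train and 471 dev) can be reproduced arithmetically in base R. This is a rough sketch of a 10% hold-out on integer ids only. It assumes nothing about how Flair picks the dev samples internally, and the base R seed used here is unrelated to flair$set_seed().

```r
set.seed(1964)  # base R seed for this sketch only; not the Flair seed

n_train <- 4710                  # train size reported in the output above
train_ids <- seq_len(n_train)    # stand-in ids, one per training Sentence

# Hold out 10% of the train ids as a dev set
dev_size <- round(0.1 * n_train)
dev_ids <- sample(train_ids, size = dev_size)
remaining_train_ids <- setdiff(train_ids, dev_ids)

split_summary <- sprintf("Train: %d | Dev: %d",
                         length(remaining_train_ids), length(dev_ids))
print(split_summary)
#> [1] "Train: 4239 | Dev: 471"
```

The counts match the log message because 10% of 4710 is 471. Which ids end up in the dev set depends on the seed, which is why the seed is fixed before building the Corpus.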

Sequence Tagging

Tag Entities in Text

Let’s run named entity recognition over the following example sentence: “I love Berlin and New York”. To do this, all you need to do is make a Sentence object for this text, load a pre-trained model and use it to predict tags for the object.

NER Models

| ID | Task | Language | Training Dataset | Accuracy | Contributor / Notes |

|---|---|---|---|---|---|

| ‘ner’ | NER (4-class) | English | Conll-03 | 93.03 (F1) | |

| ‘ner-fast’ | NER (4-class) | English | Conll-03 | 92.75 (F1) | (fast model) |

| ‘ner-large’ | NER (4-class) | English / Multilingual | Conll-03 | 94.09 (F1) | (large model) |

| ‘ner-pooled’ | NER (4-class) | English | Conll-03 | 93.24 (F1) | (memory inefficient) |

| ‘ner-ontonotes’ | NER (18-class) | English | Ontonotes | 89.06 (F1) | |

| ‘ner-ontonotes-fast’ | NER (18-class) | English | Ontonotes | 89.27 (F1) | (fast model) |

| ‘ner-ontonotes-large’ | NER (18-class) | English / Multilingual | Ontonotes | 90.93 (F1) | (large model) |

| ‘ar-ner’ | NER (4-class) | Arabic | AQMAR & ANERcorp (curated) | 86.66 (F1) | |

| ‘da-ner’ | NER (4-class) | Danish | Danish NER dataset | | AmaliePauli |

| ‘de-ner’ | NER (4-class) | German | Conll-03 | 87.94 (F1) | |

| ‘de-ner-large’ | NER (4-class) | German / Multilingual | Conll-03 | 92.31 (F1) | |

| ‘de-ner-germeval’ | NER (4-class) | German | Germeval | 84.90 (F1) | |

| ‘de-ner-legal’ | NER (legal text) | German | LER dataset | 96.35 (F1) | |

| ‘fr-ner’ | NER (4-class) | French | WikiNER (aij-wikiner-fr-wp3) | 95.57 (F1) | mhham |

| ‘es-ner-large’ | NER (4-class) | Spanish | CoNLL-03 | 90.54 (F1) | mhham |

| ‘nl-ner’ | NER (4-class) | Dutch | CoNLL 2002 | 92.58 (F1) | |

| ‘nl-ner-large’ | NER (4-class) | Dutch | Conll-03 | 95.25 (F1) | |

| ‘nl-ner-rnn’ | NER (4-class) | Dutch | CoNLL 2002 | 90.79 (F1) | |

| ‘ner-ukrainian’ | NER (4-class) | Ukrainian | NER-UK dataset | 86.05 (F1) | dchaplinsky |

Source: https://flairnlp.github.io/docs/tutorial-basics/tagging-entities

POS Models

| ID | Task | Language | Training Dataset | Accuracy | Contributor / Notes |

|---|---|---|---|---|---|

| ‘pos’ | POS-tagging | English | Ontonotes | 98.19 (Accuracy) | |

| ‘pos-fast’ | POS-tagging | English | Ontonotes | 98.1 (Accuracy) | (fast model) |

| ‘upos’ | POS-tagging (universal) | English | Ontonotes | 98.6 (Accuracy) | |

| ‘upos-fast’ | POS-tagging (universal) | English | Ontonotes | 98.47 (Accuracy) | (fast model) |

| ‘pos-multi’ | POS-tagging | Multilingual | UD Treebanks | 96.41 (average acc.) | (12 languages) |

| ‘pos-multi-fast’ | POS-tagging | Multilingual | UD Treebanks | 92.88 (average acc.) | (12 languages) |

| ‘ar-pos’ | POS-tagging | Arabic (+dialects) | combination of corpora | | |

| ‘de-pos’ | POS-tagging | German | UD German - HDT | 98.50 (Accuracy) | |

| ‘de-pos-tweets’ | POS-tagging | German | German Tweets | 93.06 (Accuracy) | stefan-it |

| ‘da-pos’ | POS-tagging | Danish | Danish Dependency Treebank | | AmaliePauli |

| ‘ml-pos’ | POS-tagging | Malayalam | 30000 Malayalam sentences | 83 | sabiqueqb |

| ‘ml-upos’ | POS-tagging | Malayalam | 30000 Malayalam sentences | 87 | sabiqueqb |

| ‘pt-pos-clinical’ | POS-tagging | Portuguese | PUCPR | 92.39 | LucasFerroHAILab for clinical texts |

| ‘pos-ukrainian’ | POS-tagging | Ukrainian | Ukrainian UD | 97.93 (F1) | dchaplinsky |

Source: https://flairnlp.github.io/docs/tutorial-basics/part-of-speech-tagging

# attach flaiR in R

library(flaiR)

# make a sentence

Sentence <- flair_data()$Sentence

sentence <- Sentence('I love Berlin and New York.')

# load the NER tagger

SequenceTagger <- flair_models()$SequenceTagger

tagger <- SequenceTagger$load('flair/ner-english')

#> 2025-10-29 01:21:51,628 SequenceTagger predicts: Dictionary with 20 tags: <unk>, O, S-ORG, S-MISC, B-PER, E-PER, S-LOC, B-ORG, E-ORG, I-PER, S-PER, B-MISC, I-MISC, E-MISC, I-ORG, B-LOC, E-LOC, I-LOC, <START>, <STOP>

# run NER over sentence

tagger$predict(sentence)

To print all annotations:

# print the sentence with all annotations

print(sentence)

#> Sentence[7]: "I love Berlin and New York." → ["Berlin"/LOC, "New York"/LOC]

You can also use a for loop to print out each label. Python is indexed from 0, but sentence$get_labels() can be iterated with seq_along(sentence$get_labels()) and [[i]], as the POS example in the next section does.

Tag Part-of-Speech

We use flair/pos-english, one of the standard models on Hugging Face, for POS tagging.

# attach flaiR in R

library(flaiR)

# make a sentence

Sentence <- flair_data()$Sentence

sentence <- Sentence('I love Berlin and New York.')

# load the POS tagger

Classifier <- flair_nn()$Classifier

tagger <- Classifier$load('pos')

#> 2025-10-29 01:21:52,660 SequenceTagger predicts: Dictionary with 53 tags: <unk>, O, UH, ,, VBD, PRP, VB, PRP$, NN, RB, ., DT, JJ, VBP, VBG, IN, CD, NNS, NNP, WRB, VBZ, WDT, CC, TO, MD, VBN, WP, :, RP, EX, JJR, FW, XX, HYPH, POS, RBR, JJS, PDT, NNPS, RBS, AFX, WP$, -LRB-, -RRB-, ``, '', LS, $, SYM, ADD

Penn Treebank POS Tags Reference

| Tag | Description | Example |

|---|---|---|

| DT | Determiner | the, a, these |

| NN | Noun, singular | cat, tree |

| NNS | Noun, plural | cats, trees |

| NNP | Proper noun, singular | John, London |

| NNPS | Proper noun, plural | Americans |

| VB | Verb, base form | take |

| VBD | Verb, past tense | took |

| VBG | Verb, gerund/present participle | taking |

| VBN | Verb, past participle | taken |

| VBP | Verb, non-3rd person singular present | take |

| VBZ | Verb, 3rd person singular present | takes |

| JJ | Adjective | big |

| RB | Adverb | quickly |

| O | Other | - |

| , | Comma | , |

| . | Period | . |

| : | Colon | : |

| -LRB- | Left bracket | ( |

| -RRB- | Right bracket | ) |

| `` | Opening quotation | “ |

| ’’ | Closing quotation | ” |

| HYPH | Hyphen | - |

| CD | Cardinal number | 1, 2, 3 |

| IN | Preposition | in, on, at |

| PRP | Personal pronoun | I, you, he |

| PRP$ | Possessive pronoun | my, your |

| UH | Interjection | oh, wow |

| FW | Foreign word | café |

| SYM | Symbol | +, % |
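The reference table above can double as a lookup when reading tagger output. The following is a minimal base R sketch. It assumes the predicted tags have already been collected into a character vector (typed by hand here from the tagged example sentence in this section), and the names penn_tags and described are illustrative only. Tags that are not in the lookup, such as CC, come back as NA.

```r
# A few entries from the reference table, as a named character vector
penn_tags <- c(
  PRP = "Personal pronoun",
  VBP = "Verb, non-3rd person singular present",
  NNP = "Proper noun, singular",
  "." = "Period"
)

# Tags for "I love Berlin and New York." (typed by hand for this sketch)
predicted <- c("PRP", "VBP", "NNP", "CC", "NNP", "NNP", ".")

# Look up each tag; tags missing from penn_tags yield NA
described <- data.frame(
  tag = predicted,
  description = unname(penn_tags[predicted])
)
print(described)
```

Extending penn_tags with the remaining rows of the table removes the NA entries.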

# run POS tagging over sentence

tagger$predict(sentence)

# print the sentence with all annotations

print(sentence)

#> Sentence[7]: "I love Berlin and New York." → ["I"/PRP, "love"/VBP, "Berlin"/NNP, "and"/CC, "New"/NNP, "York"/NNP, "."/.]

Use a for loop to print out each POS tag.

for (i in seq_along(sentence$get_labels())) {

print(sentence$get_labels()[[i]])

}

#> 'Token[0]: "I"'/'PRP' (1.0)

#> 'Token[1]: "love"'/'VBP' (1.0)

#> 'Token[2]: "Berlin"'/'NNP' (0.9999)

#> 'Token[3]: "and"'/'CC' (1.0)

#> 'Token[4]: "New"'/'NNP' (1.0)

#> 'Token[5]: "York"'/'NNP' (1.0)

#> 'Token[6]: "."'/'.' (1.0)

Detect Sentiment

Let’s run sentiment analysis over the same sentence to determine whether it is POSITIVE or NEGATIVE.

You can do this with essentially the same code as above. Instead of

loading the ‘ner’ model, you now load the 'sentiment'

model:

# attach flaiR in R

library(flaiR)

# make a sentence

Sentence <- flair_data()$Sentence

sentence <- Sentence('I love Berlin and New York.')

# load the Classifier tagger from flair.nn module

Classifier <- flair_nn()$Classifier

tagger <- Classifier$load('sentiment')

# run sentiment analysis over sentence

tagger$predict(sentence)

# print the sentence with all annotations

print(sentence)

#> Sentence[7]: "I love Berlin and New York." → POSITIVE (0.9982)

Dealing with Dataframe

Parts-of-Speech Tagging Across Full DataFrame

You can apply Part-of-Speech (POS) tagging across an entire DataFrame using Flair’s pre-trained models. Let’s walk through an example using the pos-fast model. First, let’s load our required packages and sample data:

For POS tagging, we’ll use Flair’s pre-trained model. The pos-fast model offers a good balance between speed and accuracy. For more pre-trained models, see the Flair POS tagging documentation. There are two ways to load the POS tagger:

- Load with tag dictionary display (default):

tagger_pos <- load_tagger_pos("pos-fast")

#> Loading POS tagger model: pos-fast

#> 2025-10-29 01:21:55,936 SequenceTagger predicts: Dictionary with 53 tags: <unk>, O, UH, ,, VBD, PRP, VB, PRP$, NN, RB, ., DT, JJ, VBP, VBG, IN, CD, NNS, NNP, WRB, VBZ, WDT, CC, TO, MD, VBN, WP, :, RP, EX, JJR, FW, XX, HYPH, POS, RBR, JJS, PDT, NNPS, RBS, AFX, WP$, -LRB-, -RRB-, ``, '', LS, $, SYM, ADD

#>

#> POS Tagger Dictionary:

#> ========================================

#> Total tags: 53

#> ----------------------------------------

#> Special: <unk>, O, <START>, <STOP>

#> Nouns: PRP, PRP$, NN, NNS, NNP, WP, EX, NNPS, WP$

#> Verbs: VBD, VB, VBP, VBG, VBZ, MD, VBN

#> Adjectives: JJ, JJR, POS, JJS

#> Adverbs: RB, WRB, RBR, RBS

#> Determiners: DT, WDT, PDT

#> Prepositions: IN, TO

#> Conjunctions: CC

#> Numbers: CD

#> Punctuation: <unk>, ,, ., :, HYPH, -LRB-, -RRB-, ``, '', $, NFP, <START>, <STOP>

#> Others: UH, FW, XX, LS, $, SYM, ADD

#> ----------------------------------------

#> Common POS Tag Meanings:

#> NN*: Nouns (NNP: Proper, NNS: Plural)

#> VB*: Verbs (VBD: Past, VBG: Gerund)

#> JJ*: Adjectives (JJR: Comparative)

#> RB*: Adverbs

#> PRP: Pronouns, DT: Determiners

#> IN: Prepositions, CC: Conjunctions

#> ========================================

This will show you all available POS tags grouped by categories (nouns, verbs, adjectives, etc.).

- Load without tag display for a cleaner output:

pos_tagger <- load_tagger_pos("pos-fast", show_tags = FALSE)

#> Loading POS tagger model: pos-fast

#> 2025-10-29 01:21:56,318 SequenceTagger predicts: Dictionary with 53 tags: <unk>, O, UH, ,, VBD, PRP, VB, PRP$, NN, RB, ., DT, JJ, VBP, VBG, IN, CD, NNS, NNP, WRB, VBZ, WDT, CC, TO, MD, VBN, WP, :, RP, EX, JJR, FW, XX, HYPH, POS, RBR, JJS, PDT, NNPS, RBS, AFX, WP$, -LRB-, -RRB-, ``, '', LS, $, SYM, ADD

Now we can process our texts:

results <- get_pos(texts = uk_immigration$text,

doc_ids = uk_immigration$speaker,

show.text_id = TRUE,

tagger = pos_tagger)

head(results, n = 10)

#> doc_id token_id

#> <char> <num>

#> 1: Philip Hollobone 0

#> 2: Philip Hollobone 1

#> 3: Philip Hollobone 2

#> 4: Philip Hollobone 3

#> 5: Philip Hollobone 4

#> 6: Philip Hollobone 5

#> 7: Philip Hollobone 6

#> 8: Philip Hollobone 7

#> 9: Philip Hollobone 8

#> 10: Philip Hollobone 9

#> text_id

#> <char>

#> 1: I thank Mr. Speaker for giving me permission to hold this debate today. I welcome the Minister-I very much appreciate the contact from his office prior to today-and the Conservative and Liberal Democrat Front Benchers to the debate. I also welcome my hon. Friends on the Back Benches. Immigration is the most important issue for my constituents. I get more complaints, comments and suggestions about immigration than about anything else. In the Kettering constituency, the number of immigrants is actually very low. There is a well-settled Sikh community in the middle of Kettering town itself, which has been in Kettering for some 40 or 50 years and is very much part of the local community and of the fabric of local life. There are other very small migrant groups in my constituency, but it is predominantly made up of indigenous British people. However, there is huge concern among my constituents about the level of immigration into our country. I believe that I am right in saying that, in recent years, net immigration into the United Kingdom is the largest wave of immigration that our country has ever known and, proportionately, is probably the biggest wave of immigration since the Norman conquest. My contention is that our country simply cannot cope with immigration on that scale-to coin a phrase, we simply cannot go on like this. It is about time that mainstream politicians started airing the views of their constituents, because for too long people have muttered under their breath that they are concerned about immigration. They have been frightened to speak out about it because they are frightened of being accused of being racist. My contention is that immigration is not a racist issue; it is a question of numbers. I personally could not care tuppence about the ethnicity of the immigrants concerned, the colour of their skin or the language that they speak. What I am concerned about is the very large numbers of new arrivals to our country. 
My contention is that the United Kingdom simply cannot cope with them.

#> 2: I thank Mr. Speaker for giving me permission to hold this debate today. I welcome the Minister-I very much appreciate the contact from his office prior to today-and the Conservative and Liberal Democrat Front Benchers to the debate. I also welcome my hon. Friends on the Back Benches. Immigration is the most important issue for my constituents. I get more complaints, comments and suggestions about immigration than about anything else. In the Kettering constituency, the number of immigrants is actually very low. There is a well-settled Sikh community in the middle of Kettering town itself, which has been in Kettering for some 40 or 50 years and is very much part of the local community and of the fabric of local life. There are other very small migrant groups in my constituency, but it is predominantly made up of indigenous British people. However, there is huge concern among my constituents about the level of immigration into our country. I believe that I am right in saying that, in recent years, net immigration into the United Kingdom is the largest wave of immigration that our country has ever known and, proportionately, is probably the biggest wave of immigration since the Norman conquest. My contention is that our country simply cannot cope with immigration on that scale-to coin a phrase, we simply cannot go on like this. It is about time that mainstream politicians started airing the views of their constituents, because for too long people have muttered under their breath that they are concerned about immigration. They have been frightened to speak out about it because they are frightened of being accused of being racist. My contention is that immigration is not a racist issue; it is a question of numbers. I personally could not care tuppence about the ethnicity of the immigrants concerned, the colour of their skin or the language that they speak. What I am concerned about is the very large numbers of new arrivals to our country. 
My contention is that the United Kingdom simply cannot cope with them.

#> 3: I thank Mr. Speaker for giving me permission to hold this debate today. I welcome the Minister-I very much appreciate the contact from his office prior to today-and the Conservative and Liberal Democrat Front Benchers to the debate. I also welcome my hon. Friends on the Back Benches. Immigration is the most important issue for my constituents. I get more complaints, comments and suggestions about immigration than about anything else. In the Kettering constituency, the number of immigrants is actually very low. There is a well-settled Sikh community in the middle of Kettering town itself, which has been in Kettering for some 40 or 50 years and is very much part of the local community and of the fabric of local life. There are other very small migrant groups in my constituency, but it is predominantly made up of indigenous British people. However, there is huge concern among my constituents about the level of immigration into our country. I believe that I am right in saying that, in recent years, net immigration into the United Kingdom is the largest wave of immigration that our country has ever known and, proportionately, is probably the biggest wave of immigration since the Norman conquest. My contention is that our country simply cannot cope with immigration on that scale-to coin a phrase, we simply cannot go on like this. It is about time that mainstream politicians started airing the views of their constituents, because for too long people have muttered under their breath that they are concerned about immigration. They have been frightened to speak out about it because they are frightened of being accused of being racist. My contention is that immigration is not a racist issue; it is a question of numbers. I personally could not care tuppence about the ethnicity of the immigrants concerned, the colour of their skin or the language that they speak. What I am concerned about is the very large numbers of new arrivals to our country. 
My contention is that the United Kingdom simply cannot cope with them.

#> 4: I thank Mr. Speaker for giving me permission to hold this debate today. I welcome the Minister-I very much appreciate the contact from his office prior to today-and the Conservative and Liberal Democrat Front Benchers to the debate. I also welcome my hon. Friends on the Back Benches. Immigration is the most important issue for my constituents. I get more complaints, comments and suggestions about immigration than about anything else. In the Kettering constituency, the number of immigrants is actually very low. There is a well-settled Sikh community in the middle of Kettering town itself, which has been in Kettering for some 40 or 50 years and is very much part of the local community and of the fabric of local life. There are other very small migrant groups in my constituency, but it is predominantly made up of indigenous British people. However, there is huge concern among my constituents about the level of immigration into our country. I believe that I am right in saying that, in recent years, net immigration into the United Kingdom is the largest wave of immigration that our country has ever known and, proportionately, is probably the biggest wave of immigration since the Norman conquest. My contention is that our country simply cannot cope with immigration on that scale-to coin a phrase, we simply cannot go on like this. It is about time that mainstream politicians started airing the views of their constituents, because for too long people have muttered under their breath that they are concerned about immigration. They have been frightened to speak out about it because they are frightened of being accused of being racist. My contention is that immigration is not a racist issue; it is a question of numbers. I personally could not care tuppence about the ethnicity of the immigrants concerned, the colour of their skin or the language that they speak. What I am concerned about is the very large numbers of new arrivals to our country. 
My contention is that the United Kingdom simply cannot cope with them.

#> 5: I thank Mr. Speaker for giving me permission to hold this debate today. I welcome the Minister-I very much appreciate the contact from his office prior to today-and the Conservative and Liberal Democrat Front Benchers to the debate. I also welcome my hon. Friends on the Back Benches. Immigration is the most important issue for my constituents. I get more complaints, comments and suggestions about immigration than about anything else. In the Kettering constituency, the number of immigrants is actually very low. There is a well-settled Sikh community in the middle of Kettering town itself, which has been in Kettering for some 40 or 50 years and is very much part of the local community and of the fabric of local life. There are other very small migrant groups in my constituency, but it is predominantly made up of indigenous British people. However, there is huge concern among my constituents about the level of immigration into our country. I believe that I am right in saying that, in recent years, net immigration into the United Kingdom is the largest wave of immigration that our country has ever known and, proportionately, is probably the biggest wave of immigration since the Norman conquest. My contention is that our country simply cannot cope with immigration on that scale-to coin a phrase, we simply cannot go on like this. It is about time that mainstream politicians started airing the views of their constituents, because for too long people have muttered under their breath that they are concerned about immigration. They have been frightened to speak out about it because they are frightened of being accused of being racist. My contention is that immigration is not a racist issue; it is a question of numbers. I personally could not care tuppence about the ethnicity of the immigrants concerned, the colour of their skin or the language that they speak. What I am concerned about is the very large numbers of new arrivals to our country. 
My contention is that the United Kingdom simply cannot cope with them.

#> 6: I thank Mr. Speaker for giving me permission to hold this debate today. I welcome the Minister-I very much appreciate the contact from his office prior to today-and the Conservative and Liberal Democrat Front Benchers to the debate. I also welcome my hon. Friends on the Back Benches. Immigration is the most important issue for my constituents. I get more complaints, comments and suggestions about immigration than about anything else. In the Kettering constituency, the number of immigrants is actually very low. There is a well-settled Sikh community in the middle of Kettering town itself, which has been in Kettering for some 40 or 50 years and is very much part of the local community and of the fabric of local life. There are other very small migrant groups in my constituency, but it is predominantly made up of indigenous British people. However, there is huge concern among my constituents about the level of immigration into our country. I believe that I am right in saying that, in recent years, net immigration into the United Kingdom is the largest wave of immigration that our country has ever known and, proportionately, is probably the biggest wave of immigration since the Norman conquest. My contention is that our country simply cannot cope with immigration on that scale-to coin a phrase, we simply cannot go on like this. It is about time that mainstream politicians started airing the views of their constituents, because for too long people have muttered under their breath that they are concerned about immigration. They have been frightened to speak out about it because they are frightened of being accused of being racist. My contention is that immigration is not a racist issue; it is a question of numbers. I personally could not care tuppence about the ethnicity of the immigrants concerned, the colour of their skin or the language that they speak. What I am concerned about is the very large numbers of new arrivals to our country. 
My contention is that the United Kingdom simply cannot cope with them.

#> 7: I thank Mr. Speaker for giving me permission to hold this debate today. I welcome the Minister-I very much appreciate the contact from his office prior to today-and the Conservative and Liberal Democrat Front Benchers to the debate. I also welcome my hon. Friends on the Back Benches. Immigration is the most important issue for my constituents. I get more complaints, comments and suggestions about immigration than about anything else. In the Kettering constituency, the number of immigrants is actually very low. There is a well-settled Sikh community in the middle of Kettering town itself, which has been in Kettering for some 40 or 50 years and is very much part of the local community and of the fabric of local life. There are other very small migrant groups in my constituency, but it is predominantly made up of indigenous British people. However, there is huge concern among my constituents about the level of immigration into our country. I believe that I am right in saying that, in recent years, net immigration into the United Kingdom is the largest wave of immigration that our country has ever known and, proportionately, is probably the biggest wave of immigration since the Norman conquest. My contention is that our country simply cannot cope with immigration on that scale-to coin a phrase, we simply cannot go on like this. It is about time that mainstream politicians started airing the views of their constituents, because for too long people have muttered under their breath that they are concerned about immigration. They have been frightened to speak out about it because they are frightened of being accused of being racist. My contention is that immigration is not a racist issue; it is a question of numbers. I personally could not care tuppence about the ethnicity of the immigrants concerned, the colour of their skin or the language that they speak. What I am concerned about is the very large numbers of new arrivals to our country. 
My contention is that the United Kingdom simply cannot cope with them.

#> 8: I thank Mr. Speaker for giving me permission to hold this debate today. I welcome the Minister-I very much appreciate the contact from his office prior to today-and the Conservative and Liberal Democrat Front Benchers to the debate. I also welcome my hon. Friends on the Back Benches. Immigration is the most important issue for my constituents. I get more complaints, comments and suggestions about immigration than about anything else. In the Kettering constituency, the number of immigrants is actually very low. There is a well-settled Sikh community in the middle of Kettering town itself, which has been in Kettering for some 40 or 50 years and is very much part of the local community and of the fabric of local life. There are other very small migrant groups in my constituency, but it is predominantly made up of indigenous British people. However, there is huge concern among my constituents about the level of immigration into our country. I believe that I am right in saying that, in recent years, net immigration into the United Kingdom is the largest wave of immigration that our country has ever known and, proportionately, is probably the biggest wave of immigration since the Norman conquest. My contention is that our country simply cannot cope with immigration on that scale-to coin a phrase, we simply cannot go on like this. It is about time that mainstream politicians started airing the views of their constituents, because for too long people have muttered under their breath that they are concerned about immigration. They have been frightened to speak out about it because they are frightened of being accused of being racist. My contention is that immigration is not a racist issue; it is a question of numbers. I personally could not care tuppence about the ethnicity of the immigrants concerned, the colour of their skin or the language that they speak. What I am concerned about is the very large numbers of new arrivals to our country. 
My contention is that the United Kingdom simply cannot cope with them.

#> 9: I thank Mr. Speaker for giving me permission to hold this debate today. I welcome the Minister-I very much appreciate the contact from his office prior to today-and the Conservative and Liberal Democrat Front Benchers to the debate. I also welcome my hon. Friends on the Back Benches. Immigration is the most important issue for my constituents. I get more complaints, comments and suggestions about immigration than about anything else. In the Kettering constituency, the number of immigrants is actually very low. There is a well-settled Sikh community in the middle of Kettering town itself, which has been in Kettering for some 40 or 50 years and is very much part of the local community and of the fabric of local life. There are other very small migrant groups in my constituency, but it is predominantly made up of indigenous British people. However, there is huge concern among my constituents about the level of immigration into our country. I believe that I am right in saying that, in recent years, net immigration into the United Kingdom is the largest wave of immigration that our country has ever known and, proportionately, is probably the biggest wave of immigration since the Norman conquest. My contention is that our country simply cannot cope with immigration on that scale-to coin a phrase, we simply cannot go on like this. It is about time that mainstream politicians started airing the views of their constituents, because for too long people have muttered under their breath that they are concerned about immigration. They have been frightened to speak out about it because they are frightened of being accused of being racist. My contention is that immigration is not a racist issue; it is a question of numbers. I personally could not care tuppence about the ethnicity of the immigrants concerned, the colour of their skin or the language that they speak. What I am concerned about is the very large numbers of new arrivals to our country. 
My contention is that the United Kingdom simply cannot cope with them.

#> 10: I thank Mr. Speaker for giving me permission to hold this debate today. I welcome the Minister-I very much appreciate the contact from his office prior to today-and the Conservative and Liberal Democrat Front Benchers to the debate. I also welcome my hon. Friends on the Back Benches. Immigration is the most important issue for my constituents. I get more complaints, comments and suggestions about immigration than about anything else. In the Kettering constituency, the number of immigrants is actually very low. There is a well-settled Sikh community in the middle of Kettering town itself, which has been in Kettering for some 40 or 50 years and is very much part of the local community and of the fabric of local life. There are other very small migrant groups in my constituency, but it is predominantly made up of indigenous British people. However, there is huge concern among my constituents about the level of immigration into our country. I believe that I am right in saying that, in recent years, net immigration into the United Kingdom is the largest wave of immigration that our country has ever known and, proportionately, is probably the biggest wave of immigration since the Norman conquest. My contention is that our country simply cannot cope with immigration on that scale-to coin a phrase, we simply cannot go on like this. It is about time that mainstream politicians started airing the views of their constituents, because for too long people have muttered under their breath that they are concerned about immigration. They have been frightened to speak out about it because they are frightened of being accused of being racist. My contention is that immigration is not a racist issue; it is a question of numbers. I personally could not care tuppence about the ethnicity of the immigrants concerned, the colour of their skin or the language that they speak. What I am concerned about is the very large numbers of new arrivals to our country. 
My contention is that the United Kingdom simply cannot cope with them.

#> token tag score

#> <char> <char> <num>

#> 1: I PRP 1.0000

#> 2: thank VBP 0.9992

#> 3: Mr. NNP 1.0000

#> 4: Speaker NNP 1.0000

#> 5: for IN 1.0000

#> 6: giving VBG 1.0000

#> 7: me PRP 1.0000

#> 8: permission NN 0.9999

#> 9: to TO 0.9999

#> 10: hold VB 1.0000

Tagging Entities Across Full DataFrame

This section focuses on performing Named Entity Recognition (NER) on data stored in a dataframe format. My goal is to identify and tag named entities within text that is organized in a structured dataframe.

I load the flaiR package and use the built-in uk_immigration dataset. For demonstration purposes, I’m only taking the first two rows. This dataset contains discussions about immigration in the UK.

Load the pre-trained model ner. For more pre-trained models, see https://flairnlp.github.io/docs/tutorial-basics/tagging-entities.

Next, I load the latest model hosted and maintained on Hugging Face by the Flair NLP team. For more Flair NER models, you can visit the official Flair NLP page on Hugging Face (https://huggingface.co/flair).

# Load model without displaying tags

# tagger <- load_tagger_ner("flair/ner-english-large", show_tags = FALSE)

library(flaiR)

tagger_ner <- load_tagger_ner("flair/ner-english-ontonotes")

#> 2025-10-29 01:22:01,578 SequenceTagger predicts: Dictionary with 75 tags: O, S-PERSON, B-PERSON, E-PERSON, I-PERSON, S-GPE, B-GPE, E-GPE, I-GPE, S-ORG, B-ORG, E-ORG, I-ORG, S-DATE, B-DATE, E-DATE, I-DATE, S-CARDINAL, B-CARDINAL, E-CARDINAL, I-CARDINAL, S-NORP, B-NORP, E-NORP, I-NORP, S-MONEY, B-MONEY, E-MONEY, I-MONEY, S-PERCENT, B-PERCENT, E-PERCENT, I-PERCENT, S-ORDINAL, B-ORDINAL, E-ORDINAL, I-ORDINAL, S-LOC, B-LOC, E-LOC, I-LOC, S-TIME, B-TIME, E-TIME, I-TIME, S-WORK_OF_ART, B-WORK_OF_ART, E-WORK_OF_ART, I-WORK_OF_ART, S-FAC

#>

#> NER Tagger Dictionary:

#> ========================================

#> Total tags: 75

#> Model: flair/ner-english-ontonotes

#> ----------------------------------------

#> Special : O, <START>, <STOP>

#> Person : S-PERSON, B-PERSON, E-PERSON, I-PERSON

#> Organization : S-ORG, B-ORG, E-ORG, I-ORG

#> Location : S-GPE, B-GPE, E-GPE, I-GPE, S-LOC, B-LOC, E-LOC, I-LOC

#> Time : S-DATE, B-DATE, E-DATE, I-DATE, S-TIME, B-TIME, E-TIME, I-TIME

#> Numbers : S-CARDINAL, B-CARDINAL, E-CARDINAL, I-CARDINAL, S-MONEY, B-MONEY, E-MONEY, I-MONEY, S-PERCENT, B-PERCENT, E-PERCENT, I-PERCENT, S-ORDINAL, B-ORDINAL, E-ORDINAL, I-ORDINAL

#> Groups : S-NORP, B-NORP, E-NORP, I-NORP

#> Facilities : S-FAC, B-FAC, E-FAC, I-FAC

#> Products : S-PRODUCT, B-PRODUCT, E-PRODUCT, I-PRODUCT

#> Events : S-EVENT, B-EVENT, E-EVENT, I-EVENT

#> Art : S-WORK_OF_ART, B-WORK_OF_ART, E-WORK_OF_ART, I-WORK_OF_ART

#> Languages : S-LANGUAGE, B-LANGUAGE, E-LANGUAGE, I-LANGUAGE

#> Laws : S-LAW, B-LAW, E-LAW, I-LAW

#> ----------------------------------------

#> Tag scheme: BIOES

#> B-: Beginning of multi-token entity

#> I-: Inside of multi-token entity

#> O: Outside (not part of any entity)

#> E-: End of multi-token entity

#> S-: Single token entity

#> ========================================

I load a pre-trained NER model. Since I’m using a Mac M1/M2, I set the model to run on the MPS device for faster processing. If I want to use other pre-trained models, I can check the Flair documentation website for available options.
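
The BIOES scheme listed in the tagger dictionary can be illustrated with a small base-R sketch. It assumes token-level tags are already available as a character vector; bioes_to_spans() is a hypothetical helper written for this illustration and is not part of flaiR, which returns assembled entities directly through get_entities().

```r
# Hypothetical helper (not part of flaiR): collapse BIOES-tagged tokens
# into entity spans with one row per entity.
bioes_to_spans <- function(tokens, tags) {
  spans <- list()
  current <- character(0)
  for (i in seq_along(tokens)) {
    tag <- tags[i]
    prefix <- substr(tag, 1, 1)
    label <- sub("^[BIES]-", "", tag)
    if (tag == "O") {
      # Outside any entity: drop an unfinished span, if there is one
      current <- character(0)
    } else if (prefix == "S") {
      # Single-token entity
      spans[[length(spans) + 1]] <- data.frame(
        entity = tokens[i], tag = label, stringsAsFactors = FALSE
      )
      current <- character(0)
    } else if (prefix == "B") {
      # Beginning of a multi-token entity
      current <- tokens[i]
    } else if (prefix == "I") {
      # Inside a multi-token entity
      current <- c(current, tokens[i])
    } else if (prefix == "E") {
      # End of a multi-token entity: emit the collected tokens
      current <- c(current, tokens[i])
      spans[[length(spans) + 1]] <- data.frame(
        entity = paste(current, collapse = " "), tag = label,
        stringsAsFactors = FALSE
      )
      current <- character(0)
    }
  }
  do.call(rbind, spans)
}

# Made-up example tokens and tags
tokens <- c("Philip", "Hollobone", "spoke", "in", "Kettering")
tags   <- c("B-PERSON", "E-PERSON", "O", "O", "S-GPE")
spans  <- bioes_to_spans(tokens, tags)
print(spans)
```

The sketch only shows how the B-, I-, E-, S- and O markers relate to entity boundaries; it does no error handling for malformed tag sequences.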

Now I’m ready to process the text:

results <- get_entities(texts = uk_immigration$text,
                        doc_ids = uk_immigration$speaker,
                        tagger = tagger_ner,
                        batch_size = 2,
                        verbose = FALSE)

#> CPU is used.

head(results, n = 10)

#> doc_id entity tag score

#> <char> <char> <char> <num>

#> 1: Philip Hollobone today DATE 0.9843613

#> 2: Philip Hollobone Conservative NORP 0.9976857

#> 3: Philip Hollobone Liberal Democrat Front Benchers ORG 0.7668477

#> 4: Philip Hollobone Kettering GPE 0.9885774

#> 5: Philip Hollobone Sikh NORP 0.9939976

#> 6: Philip Hollobone Kettering GPE 0.9955219

#> 7: Philip Hollobone Kettering GPE 0.9948049

#> 8: Philip Hollobone some 40 or 50 years DATE 0.8059650

#> 9: Philip Hollobone British NORP 0.9986913

#> 10: Philip Hollobone recent years DATE 0.8596769
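
Once the entities are in a table, ordinary R tools can summarise them. The sketch below re-types the ten rows printed above into a plain data.frame (results_demo is a stand-in name, not an object created by flaiR) and shows counting by tag, filtering by score, and averaging scores per tag. The 0.9 threshold is an arbitrary choice for illustration.

```r
# Stand-in for the first ten rows of the get_entities() output shown above
results_demo <- data.frame(
  entity = c("today", "Conservative", "Liberal Democrat Front Benchers",
             "Kettering", "Sikh", "Kettering", "Kettering",
             "some 40 or 50 years", "British", "recent years"),
  tag = c("DATE", "NORP", "ORG", "GPE", "NORP",
          "GPE", "GPE", "DATE", "NORP", "DATE"),
  score = c(0.9843613, 0.9976857, 0.7668477, 0.9885774, 0.9939976,
            0.9955219, 0.9948049, 0.8059650, 0.9986913, 0.8596769),
  stringsAsFactors = FALSE
)

# How many entities of each type were found?
tag_counts <- table(results_demo$tag)
print(tag_counts)

# Keep only entities the tagger is fairly confident about (threshold is arbitrary)
confident <- results_demo[results_demo$score >= 0.9, ]
print(confident)

# Mean confidence per entity type
mean_score <- aggregate(score ~ tag, data = results_demo, FUN = mean)
print(mean_score)
```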

Highlight Entities with Colors

This tutorial demonstrates how to use the flaiR package to identify and highlight named entities (such as names, locations, organizations) in text.

Step 1 Create Text with Named Entities

First, we load the flaiR package and work with a sample text:

library(flaiR)

data("uk_immigration")

uk_immigration <- uk_immigration[30,]

tagger_ner <- load_tagger_ner("flair/ner-english-fast")

#> 2025-10-29 01:22:09,213 SequenceTagger predicts: Dictionary with 20 tags: <unk>, O, S-ORG, S-MISC, B-PER, E-PER, S-LOC, B-ORG, E-ORG, I-PER, S-PER, B-MISC, I-MISC, E-MISC, I-ORG, B-LOC, E-LOC, I-LOC, <START>, <STOP>

#>

#> NER Tagger Dictionary:

#> ========================================

#> Total tags: 20

#> Model: flair/ner-english-fast

#> ----------------------------------------

#> Special : <unk>, O, <START>, <STOP>

#> Organization : S-ORG, B-ORG, E-ORG, I-ORG

#> Location : S-LOC, B-LOC, E-LOC, I-LOC

#> Misc : S-MISC, B-MISC, I-MISC, E-MISC

#> ----------------------------------------

#> Tag scheme: BIOES

#> B-: Beginning of multi-token entity

#> I-: Inside of multi-token entity

#> O: Outside (not part of any entity)

#> E-: End of multi-token entity

#> S-: Single token entity

#> ========================================

result <- get_entities(uk_immigration$text,
                       tagger = tagger_ner,
                       show.text_id = FALSE
                       )

#> CPU is used.

#> Warning in check_texts_and_ids(texts, doc_ids): doc_ids is NULL.

#> Auto-assigning doc_ids.

Step 2 Highlight the Named Entities

Use the highlight_text function to color-code the identified entities:

highlighted_text <- highlight_text(text = uk_immigration$text,
                                   entities_mapping = map_entities(result))

highlighted_text

Explanation:

- load_tagger_ner("flair/ner-english-fast") loads the pre-trained NER model

- get_entities() identifies named entities in the text

- map_entities() maps the identified entities to colors

- highlight_text() marks the original text using these colors

Each type of entity (such as person names, locations, organization names) will be displayed in a different color, making the named entities in the text immediately visible.
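
To make the idea of color mapping concrete, here is a minimal base-R sketch that wraps each entity in an HTML span with a background color. It is not how flaiR implements map_entities() or highlight_text(); highlight_sketch(), the example sentence, and the color values are all assumptions made for this illustration.

```r
# Hypothetical illustration (not flaiR's implementation): wrap each entity
# in an HTML <span> whose background color depends on the entity tag.
highlight_sketch <- function(text, entities, tags, colors) {
  for (i in seq_along(entities)) {
    replacement <- sprintf(
      '<span style="background-color: %s">%s (%s)</span>',
      colors[[tags[i]]], entities[i], tags[i]
    )
    # fixed = TRUE treats the entity as a literal string, not a regex
    text <- gsub(entities[i], replacement, text, fixed = TRUE)
  }
  text
}

# Made-up input
text     <- "The Minister visited Kettering with the BBC ."
entities <- c("Kettering", "BBC")
tags     <- c("LOC", "ORG")
colors   <- c(LOC = "#ffd54f", ORG = "#81d4fa")

highlighted <- highlight_sketch(text, entities, tags, colors)
cat(highlighted, "\n")
```

A simple literal replacement like this breaks down when one entity string is contained in another, which is one reason to rely on the package functions for real texts.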

The Overview of Embedding

All word embedding classes inherit from the TokenEmbeddings class and call the embed() method to embed the text. In most cases when using Flair, various and complex embedding processes are hidden behind the interface. Users simply need to instantiate the necessary embedding class and call embed() to embed text.

Here are the types of embeddings currently supported in FlairNLP:

| Class | Type | Paper |
|---|---|---|
| BytePairEmbeddings | Subword-level word embeddings | Heinzerling and Strube (2018) |
| CharacterEmbeddings | Task-trained character-level embeddings of words | Lample et al. (2016) |
| ELMoEmbeddings | Contextualized word-level embeddings | Peters et al. (2018) |
| FastTextEmbeddings | Word embeddings with subword features | Bojanowski et al. (2017) |
| FlairEmbeddings | Contextualized character-level embeddings | Akbik et al. (2018) |
| OneHotEmbeddings | Standard one-hot embeddings of text or tags | - |
| PooledFlairEmbeddings | Pooled variant of FlairEmbeddings | Akbik et al. (2019) |
| TransformerWordEmbeddings | Embeddings from pretrained transformers (BERT, XLM, GPT, RoBERTa, XLNet, DistilBERT etc.) | Devlin et al. (2018) Radford et al. (2018) Liu et al. (2019) Dai et al. (2019) Yang et al. (2019) Lample and Conneau (2019) |
| WordEmbeddings | Classic word embeddings | |

Byte Pair Embeddings

Please note that this document for R is a conversion of the Flair NLP documentation, which was written for Python.

BytePairEmbeddings are word embeddings that operate at the subword level. They can embed any word by breaking it down into subwords and looking up their corresponding embeddings. This technique was introduced by Heinzerling and Strube (2018), who demonstrated that BytePairEmbeddings achieve comparable accuracy to traditional word embeddings while requiring only a fraction of the model size. This makes them an excellent choice for training compact models.
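
The idea of "breaking a word into subwords and looking up their embeddings" can be sketched in base R with a toy vocabulary. Everything here is made up for illustration: the vocabulary, the vector values, and the segment() helper. Real byte pair models learn their segmentation from data (the greedy longest-match rule below is a simplification), and combining the first and last piece by concatenation is an assumption, chosen because it would explain why the example later in this section reports 100 values for dim = 50L.

```r
# Toy subword vocabulary with 2-dimensional vectors (made-up values)
vocab <- list(
  "gr"  = c(0.1, 0.2),
  "ass" = c(0.3, 0.4),
  "g"   = c(0.5, 0.6),
  "r"   = c(0.7, 0.8),
  "a"   = c(0.9, 1.0),
  "s"   = c(1.1, 1.2)
)

# Greedy longest-match segmentation (a simplification of learned BPE merges)
segment <- function(word, vocab) {
  pieces <- character(0)
  start <- 1
  n <- nchar(word)
  while (start <= n) {
    end <- n
    # Shrink the candidate piece until it is found in the vocabulary
    while (end >= start && !(substr(word, start, end) %in% names(vocab))) {
      end <- end - 1
    }
    if (end < start) stop("no subword covers position ", start)
    pieces <- c(pieces, substr(word, start, end))
    start <- end + 1
  }
  pieces
}

pieces <- segment("grass", vocab)
print(pieces)

# Assumed combination rule: concatenate the first and last subword vectors,
# so the word vector is twice as long as a single subword vector
word_vector <- c(vocab[[pieces[1]]], vocab[[pieces[length(pieces)]]])
print(word_vector)
```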

To initialize BytePairEmbeddings, you need to specify:

- A language code (275 languages supported)

- Number of syllables (the syllables argument, i.e. the subword vocabulary size)

- Number of dimensions (options: 50, 100, 200, or 300)

library(flaiR)

# Initialize embedding

BytePairEmbeddings <- flair_embeddings()$BytePairEmbeddings

# Create BytePairEmbeddings with specified parameters

embedding <- BytePairEmbeddings(
  language = "en",     # Language code (e.g., "en" for English)
  dim = 50L,           # Embedding dimensions: options are 50L, 100L, 200L, or 300L
  syllables = 100000L  # Subword vocabulary size
)

# Create a sample sentence

Sentence <- flair_data()$Sentence

sentence = Sentence('The grass is green .')

# Embed words in the sentence

embedding$embed(sentence)

#> [[1]]

#> Sentence[5]: "The grass is green ."

# Print embeddings

for (i in seq_along(sentence$tokens)) {
  token <- sentence$tokens[[i]]
  cat("\nWord:", token$text, "\n")

  # Convert embedding to R vector and print
  # Python index starts from 0, so use i-1
  embedding_vector <- sentence[i-1]$embedding$numpy()
  cat("Embedding shape:", length(embedding_vector), "\n")
  cat("First 5 values:", head(embedding_vector, 5), "\n")
  cat("-------------------\n")
}

#>

#> Word: The

#> Embedding shape: 100

#> First 5 values: -0.585645 0.55233 -0.335385 -0.117119 -0.3433

#> -------------------

#>

#> Word: grass

#> Embedding shape: 100

#> First 5 values: 0.370427 -0.717806 -0.489089 0.384228 0.68443

#> -------------------

#>

#> Word: is

#> Embedding shape: 100

#> First 5 values: -0.186592 0.52804 -1.011618 0.416936 -0.166446

#> -------------------

#>

#> Word: green

#> Embedding shape: 100

#> First 5 values: -0.075467 -0.874228 0.20425 1.061623 -0.246111

#> -------------------

#>

#> Word: .

#> Embedding shape: 100

#> First 5 values: -0.214652 0.212236 -0.607079 0.512853 -0.325556

#> -------------------

More information can be found on the byte pair embeddings web page.

BytePairEmbeddings also have a multilingual model capable of embedding any word in any language. You can instantiate it with:

embedding <- BytePairEmbeddings('multi')

You can also load custom BytePairEmbeddings by passing file paths through the model_file_path and embedding_file_path arguments. They correspond respectively to a SentencePiece model file and to an embedding file (Word2Vec plain text or GenSim binary).

Flair Embeddings

The following examples are translated into R from the Flair NLP documentation by Zalando Research. In Flair, using embeddings is quite straightforward. Here’s an example code snippet showing how to use Flair’s contextual string embeddings:

library(flaiR)

FlairEmbeddings <- flair_embeddings()$FlairEmbeddings

# init embedding

flair_embedding_forward <- FlairEmbeddings('news-forward')

# create a sentence

Sentence <- flair_data()$Sentence

sentence = Sentence('The grass is green .')

# embed words in sentence

flair_embedding_forward$embed(sentence)

#> [[1]]

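#> Sentence[5]: "The grass is green ."

The following contextual string embeddings are available. In each ID, replace X with either forward or backward:

| ID | Language | Embedding |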

|---|---|---|

| ‘multi-X’ | 300+ | JW300 corpus, as proposed by Agić and Vulić (2019). The corpus is licensed under CC-BY-NC-SA |

| ‘multi-X-fast’ | English, German, French, Italian, Dutch, Polish | Mix of corpora (Web, Wikipedia, Subtitles, News), CPU-friendly |

| ‘news-X’ | English | Trained with 1 billion word corpus |

| ‘news-X-fast’ | English | Trained with 1 billion word corpus, CPU-friendly |

| ‘mix-X’ | English | Trained with mixed corpus (Web, Wikipedia, Subtitles) |

| ‘ar-X’ | Arabic | Added by @stefan-it: Trained with Wikipedia/OPUS |

| ‘bg-X’ | Bulgarian | Added by @stefan-it: Trained with Wikipedia/OPUS |

| ‘bg-X-fast’ | Bulgarian | Added by @stefan-it: Trained with various sources (Europarl, Wikipedia or SETimes) |

| ‘cs-X’ | Czech | Added by @stefan-it: Trained with Wikipedia/OPUS |

| ‘cs-v0-X’ | Czech | Added by @stefan-it: LM embeddings (earlier version) |

| ‘de-X’ | German | Trained with mixed corpus (Web, Wikipedia, Subtitles) |

| ‘de-historic-ha-X’ | German (historical) | Added by @stefan-it: Historical German trained over Hamburger Anzeiger |

| ‘de-historic-wz-X’ | German (historical) | Added by @stefan-it: Historical German trained over Wiener Zeitung |

| ‘de-historic-rw-X’ | German (historical) | Added by @redewiedergabe: Historical German trained over 100 million tokens |

| ‘es-X’ | Spanish | Added by @iamyihwa: Trained with Wikipedia |

| ‘es-X-fast’ | Spanish | Added by @iamyihwa: Trained with Wikipedia, CPU-friendly |

| ‘es-clinical-’ | Spanish (clinical) | Added by @matirojasg: Trained with Wikipedia |

| ‘eu-X’ | Basque | Added by @stefan-it: Trained with Wikipedia/OPUS |

| ‘eu-v0-X’ | Basque | Added by @stefan-it: LM embeddings (earlier version) |

| ‘fa-X’ | Persian | Added by @stefan-it: Trained with Wikipedia/OPUS |

| ‘fi-X’ | Finnish | Added by @stefan-it: Trained with Wikipedia/OPUS |

| ‘fr-X’ | French | Added by @mhham: Trained with French Wikipedia |

| ‘he-X’ | Hebrew | Added by @stefan-it: Trained with Wikipedia/OPUS |

| ‘hi-X’ | Hindi | Added by @stefan-it: Trained with Wikipedia/OPUS |

| ‘hr-X’ | Croatian | Added by @stefan-it: Trained with Wikipedia/OPUS |

| ‘id-X’ | Indonesian | Added by @stefan-it: Trained with Wikipedia/OPUS |

| ‘it-X’ | Italian | Added by @stefan-it: Trained with Wikipedia/OPUS |

| ‘ja-X’ | Japanese | Added by @frtacoa: Trained with 439M words of Japanese Web crawls (2048 hidden states, 2 layers) |

| ‘nl-X’ | Dutch | Added by @stefan-it: Trained with Wikipedia/OPUS |

| ‘nl-v0-X’ | Dutch | Added by @stefan-it: LM embeddings (earlier version) |

| ‘no-X’ | Norwegian | Added by @stefan-it: Trained with Wikipedia/OPUS |

| ‘pl-X’ | Polish | Added by @borchmann: Trained with web crawls (Polish part of CommonCrawl) |

| ‘pl-opus-X’ | Polish | Added by @stefan-it: Trained with Wikipedia/OPUS |

| ‘pt-X’ | Portuguese | Added by @ericlief: LM embeddings |

| ‘sl-X’ | Slovenian | Added by @stefan-it: Trained with Wikipedia/OPUS |

| ‘sl-v0-X’ | Slovenian | Added by @stefan-it: Trained with various sources (Europarl, Wikipedia and OpenSubtitles2018) |

| ‘sv-X’ | Swedish | Added by @stefan-it: Trained with Wikipedia/OPUS |

| ‘sv-v0-X’ | Swedish | Added by @stefan-it: Trained with various sources (Europarl, Wikipedia or OpenSubtitles2018) |

| ‘ta-X’ | Tamil | Added by @stefan-it |

| ‘pubmed-X’ | English | Added by @jessepeng: Trained with 5% of PubMed abstracts until 2015 (1150 hidden states, 3 layers) |

| ‘de-impresso-hipe-v1-X’ | German (historical) | In-domain data (Swiss and Luxembourgish newspapers) for CLEF HIPE Shared task. More information on the shared task can be found in this paper |

| ‘en-impresso-hipe-v1-X’ | English (historical) | In-domain data (Chronicling America material) for CLEF HIPE Shared task. More information on the shared task can be found in this paper |

| ‘fr-impresso-hipe-v1-X’ | French (historical) | In-domain data (Swiss and Luxembourgish newspapers) for CLEF HIPE Shared task. More information on the shared task can be found in this paper |

| ‘am-X’ | Amharic | Based on 6.5m Amharic text corpus crawled from different sources. See this paper and the official GitHub Repository for more information. |

| ‘uk-X’ | Ukrainian | Added by @dchaplinsky: Trained with UberText corpus. |

So, if you want to load embeddings from the German forward LM model, instantiate the method as follows:

flair_de_forward <- FlairEmbeddings('de-forward')

And if you want to load embeddings from the Bulgarian backward LM model, instantiate the method as follows:

flair_bg_backward <- FlairEmbeddings('bg-backward')
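
The naming pattern in the table (a language or corpus prefix, a direction, and an optional fast suffix) can be captured in a few lines of base R. flair_model_id() is a hypothetical convenience helper written for this illustration; it only builds the ID string and does not check that a model with that ID actually exists.

```r
# Hypothetical helper (not part of flaiR): build a FlairEmbeddings model ID
# from the patterns in the table, e.g. 'de-X' or 'news-X-fast'.
flair_model_id <- function(prefix, direction = c("forward", "backward"), fast = FALSE) {
  direction <- match.arg(direction)
  id <- paste(prefix, direction, sep = "-")
  if (fast) id <- paste(id, "fast", sep = "-")
  id
}

id_de   <- flair_model_id("de")                           # German forward model
id_bg   <- flair_model_id("bg", "backward")               # Bulgarian backward model
id_news <- flair_model_id("news", "forward", fast = TRUE) # CPU-friendly English model

print(c(id_de, id_bg, id_news))
```

The resulting string would then be passed to FlairEmbeddings() in the same way as 'de-forward' above.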

Recommended Flair Usage in flaiR in R

We recommend combining both forward and backward Flair embeddings. Depending on the task, we also recommend adding standard word embeddings into the mix. So, our recommended StackedEmbeddings for most English tasks is:

FlairEmbeddings <- flair_embeddings()$FlairEmbeddings

WordEmbeddings <- flair_embeddings()$WordEmbeddings

StackedEmbeddings <- flair_embeddings()$StackedEmbeddings

# create a StackedEmbedding object that combines glove and forward/backward flair embeddings

stacked_embeddings <- StackedEmbeddings(list(WordEmbeddings("glove"),
                                             FlairEmbeddings("news-forward"),
                                             FlairEmbeddings("news-backward")))

That’s it! Now just use this embedding like all the other embeddings, i.e. call the embed() method over your sentences.

# create a sentence

Sentence <- flair_data()$Sentence

sentence = Sentence('The grass is green .')

# just embed a sentence using the StackedEmbedding as you would with any single embedding.

stacked_embeddings$embed(sentence)

# now check out the embedded tokens.

# Note that Python is indexing from 0. In an R for loop, using seq_along(sentence) - 1 achieves the same effect.

for (i in seq_along(sentence)-1) {
  print(sentence[i])
  print(sentence[i]$embedding)
}

#> Token[0]: "The"

#> tensor([-0.0382, -0.2449, 0.7281, ..., -0.0065, -0.0053, 0.0090])

#> Token[1]: "grass"

#> tensor([-0.8135, 0.9404, -0.2405, ..., 0.0354, -0.0255, -0.0143])

#> Token[2]: "is"

#> tensor([-5.4264e-01, 4.1476e-01, 1.0322e+00, ..., -5.3691e-04,

#> -9.6750e-03, -2.7541e-02])

#> Token[3]: "green"

#> tensor([-0.6791, 0.3491, -0.2398, ..., -0.0007, -0.1333, 0.0161])

#> Token[4]: "."

#> tensor([-0.3398, 0.2094, 0.4635, ..., 0.0005, -0.0177, 0.0032])

Words are now embedded using a concatenation of three different embeddings. This combination often gives state-of-the-art accuracy.
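
What "concatenation" means for the resulting vector can be shown with plain R vectors. The three vectors below are tiny made-up stand-ins for one token's GloVe, forward Flair, and backward Flair embeddings; the real vectors are much longer.

```r
# Made-up stand-ins for the three embeddings of a single token
glove_vec    <- c(0.1, 0.2, 0.3)
forward_vec  <- c(1.0, 2.0, 3.0, 4.0)
backward_vec <- c(5.0, 6.0, 7.0, 8.0)

# Stacking joins the vectors end to end, in the order the embeddings were listed
stacked <- c(glove_vec, forward_vec, backward_vec)

# The stacked length is the sum of the individual lengths
stacked_length <- length(stacked)
print(stacked_length)
```

Because the lengths add up, each additional embedding in the stack increases the size of every token vector and therefore the memory needed downstream.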

Pooled Flair Embeddings

We also developed a pooled variant of the FlairEmbeddings. These embeddings differ in that they constantly evolve over time, even at prediction time (i.e. after training is complete). This means that the same words in the same sentence at two different points in time may have different embeddings.

PooledFlairEmbeddings manage a ‘global’ representation of each distinct word by using a pooling operation of all past occurrences. More details on how this works may be found in Akbik et al. (2019).
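
The following base-R sketch illustrates the idea of a word memory that is pooled over all past occurrences. It is a toy model under stated assumptions, not Flair's implementation: pooled_embed() and the memory environment are hypothetical, mean pooling is an arbitrary choice of pooling operation, and joining the current vector with the pooled vector is an assumption made for the illustration.

```r
# Toy word memory: one matrix of past contextual vectors per distinct word
memory <- new.env()

# Hypothetical helper: store the current vector, pool over all occurrences so far,
# and return the current vector joined with the pooled one
pooled_embed <- function(word, context_vec, pool = mean) {
  past <- if (exists(word, envir = memory, inherits = FALSE)) {
    get(word, envir = memory)
  } else {
    NULL
  }
  all_vecs <- rbind(past, context_vec)
  assign(word, all_vecs, envir = memory)
  pooled <- apply(all_vecs, 2, pool)
  unname(c(context_vec, pooled))
}

# The same word seen twice, with different (made-up) contextual vectors
first  <- pooled_embed("grass", c(1, 2))
second <- pooled_embed("grass", c(3, 4))

print(first)
print(second)
```

The second call returns a different pooled part than the first because the memory has grown, which mirrors the statement that embeddings keep evolving at prediction time. It also shows why memory use grows with the amount of text processed.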

You can instantiate and use PooledFlairEmbeddings like any other embedding:

# initiate embedding from Flair NLP

PooledFlairEmbeddings <- flair_embeddings()$PooledFlairEmbeddings

flair_embedding_forward <- PooledFlairEmbeddings('news-forward')

# create a sentence object

sentence <- Sentence('The grass is green .')

# embed words in sentence

flair_embedding_forward$embed(sentence)

#> [[1]]

#> Sentence[5]: "The grass is green ."

Note that while we get some of our best results with PooledFlairEmbeddings, they are very memory-inefficient, since they keep past embeddings of all words in memory. In many cases, regular FlairEmbeddings will be nearly as good but with much lower memory requirements.

Transformer Embeddings

Please note that content and examples in this section have been extensively revised from the TransformerWordEmbeddings official documentation. Flair supports various Transformer-based architectures like BERT or XLNet from Hugging Face, with two classes: TransformerWordEmbeddings (to embed words or tokens) and TransformerDocumentEmbeddings (to embed documents).

Embedding Words with Transformers

For instance, to load a standard BERT transformer model, do:

library(flaiR)

# initiate embedding and load BERT model from Hugging Face

TransformerWordEmbeddings <- flair_embeddings()$TransformerWordEmbeddings

embedding <- TransformerWordEmbeddings('bert-base-uncased')

# create a sentence

Sentence <- flair_data()$Sentence

sentence = Sentence('The grass is green .')

# embed words in sentence

embedding$embed(sentence)

#> [[1]]

#> Sentence[5]: "The grass is green ."

If instead you want to use RoBERTa, do:

TransformerWordEmbeddings <- flair_embeddings()$TransformerWordEmbeddings

embedding <- TransformerWordEmbeddings('roberta-base')

sentence <- Sentence('The grass is green .')

embedding$embed(sentence)

#> [[1]]

#> Sentence[5]: "The grass is green ."

{flaiR} interacts with Flair NLP (Zalando Research), allowing you to use pre-trained models from Hugging Face, where you can search for models to use.

Embedding Documents with Transformers

To embed a whole sentence as one (instead of each word in the sentence), simply use TransformerDocumentEmbeddings instead:

TransformerDocumentEmbeddings <- flair_embeddings()$TransformerDocumentEmbeddings

embedding <- TransformerDocumentEmbeddings('roberta-base')

sentence <- Sentence('The grass is green.')

embedding$embed(sentence)

#> [[1]]

#> Sentence[5]: "The grass is green."

Arguments

There are several options that you can set when you initialize the TransformerWordEmbeddings and TransformerDocumentEmbeddings classes:

| Argument | Default | Description |
|---|---|---|
| model | bert-base-uncased | The string identifier of the transformer model you want to use (see above) |
| layers | all | Defines the layers of the Transformer-based model that produce the embedding |
| subtoken_pooling | first | See Pooling operation section. |
| layer_mean | True | See Layer mean section. |
| fine_tune | False | Whether or not embeddings are fine-tuneable. |
| allow_long_sentences | True | Whether or not texts longer than maximal sequence length are supported. |
| use_context | False | Set to True to include context outside of sentences. This can greatly increase accuracy on some tasks, but slows down embedding generation. |

Layers

The layers argument controls which transformer layers are used for the embedding. If you set this value to '-1,-2,-3,-4', the top 4 layers are used to make an embedding. If you set it to '-1', only the last layer is used. If you set it to 'all', then all layers are used. This affects the length of an embedding, since layers are just concatenated.

Sentence <- flair_data()$Sentence

TransformerWordEmbeddings <- flair_embeddings()$TransformerWordEmbeddings

sentence = Sentence('The grass is green.')

# use only the last layer

embeddings <- TransformerWordEmbeddings('bert-base-uncased', layers='-1', layer_mean = FALSE)

embeddings$embed(sentence)

#> [[1]]

#> Sentence[5]: "The grass is green."

print(sentence[0]$embedding$size())

#> torch.Size([768])

sentence$clear_embeddings()

# use last two layers

embeddings <- TransformerWordEmbeddings('bert-base-uncased', layers='-1,-2', layer_mean = FALSE)

embeddings$embed(sentence)

#> [[1]]

#> Sentence[5]: "The grass is green."

print(sentence[0]$embedding$size())

#> torch.Size([1536])

sentence$clear_embeddings()

# use ALL layers

embeddings = TransformerWordEmbeddings('bert-base-uncased', layers='all', layer_mean=FALSE)

embeddings$embed(sentence)

#> [[1]]

#> Sentence[5]: "The grass is green."

print(sentence[0]$embedding$size())

#> torch.Size([9984])

Here’s an example of how a torch.Size object can be created from R. You can directly import torch through reticulate, since it was already installed as a flair dependency when you installed flair in Python.

# Import torch through reticulate

torch <- reticulate::import('torch')

# Create torch.Size objects with integer dimensions

torch$Size(list(768L))

#> torch.Size([768])

torch$Size(list(1536L))

#> torch.Size([1536])

torch$Size(list(9984L))

#> torch.Size([9984])

Notice the L after the numbers in the list? This ensures that R treats the numbers as integers. If you’re generating these numbers dynamically (e.g., through computation), make sure they are integers before passing them to torch.

In other words, the size of the embedding grows with the number of layers used (but only if layer_mean is set to FALSE; otherwise the length is always the same).
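
The sizes printed in this section (768, 1536 and 9984) follow from simple arithmetic on 768-dimensional layers. The base-R sketch below reproduces it. embedding_length() is a hypothetical helper, and the count of 13 layers for layers = 'all' is inferred from 9984 / 768 in the output above rather than taken from the Flair documentation.

```r
# Size of one layer's output for bert-base-uncased, as printed above
hidden_size <- 768L

# Hypothetical helper: expected embedding length for a given number of layers
embedding_length <- function(n_layers, layer_mean = FALSE) {
  if (layer_mean) {
    # Averaging over layers keeps the size of a single layer
    hidden_size
  } else {
    # Concatenating layers multiplies the size by the number of layers
    hidden_size * n_layers
  }
}

# A dynamically computed count is a double; convert it to an integer first
n_all <- as.integer(9984 / 768)

len_last      <- embedding_length(1L)
len_last_two  <- embedding_length(2L)
len_all       <- embedding_length(n_all)
len_all_mean  <- embedding_length(n_all, layer_mean = TRUE)

print(c(len_last, len_last_two, len_all, len_all_mean))
```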

Pooling Operation

Most Transformer-based models use subword tokenization. For example, the token puppeteer could be tokenized into the subwords pupp, ##ete, and ##er.

We implement different pooling operations for these subwords to generate the final token representation:

- first: only the embedding of the first subword is used

- last: only the embedding of the last subword is used

- first_last: embeddings of the first and last subwords are concatenated and used

- mean: a torch.mean over all subword embeddings is calculated and used
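The four pooling operations listed above can be illustrated with a toy example in base R. This is only a sketch of the idea, not Flair's implementation: the matrix values are made up, and `pool_subwords` is a hypothetical helper.

```r
# Toy subword embeddings for "puppeteer": 3 subwords x 4 dimensions.
# The values are made up for illustration.
subwords <- matrix(
  1:12, nrow = 3, byrow = TRUE,
  dimnames = list(c("pupp", "##ete", "##er"), NULL)
)

# Hypothetical helper that mimics the four pooling options
pool_subwords <- function(x, method = c("first", "last", "first_last", "mean")) {
  method <- match.arg(method)
  switch(method,
    first      = x[1, ],                    # first subword only
    last       = x[nrow(x), ],              # last subword only
    first_last = c(x[1, ], x[nrow(x), ]),   # concatenate first and last
    mean       = colMeans(x)                # average over all subwords
  )
}

length(pool_subwords(subwords, "first"))
#> [1] 4
length(pool_subwords(subwords, "first_last"))
#> [1] 8
pool_subwords(subwords, "mean")
#> [1] 5 6 7 8
```

In this sketch only first_last changes the length of the token representation, because it concatenates two subword vectors; the other three options return a vector as long as a single subword embedding.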

You can choose which one to use by passing this in the constructor:

# use first and last subtoken for each word

embeddings <- TransformerWordEmbeddings('bert-base-uncased', subtoken_pooling = 'first_last')

embeddings$embed(sentence)

#> [[1]]

#> Sentence[5]: "The grass is green."

print(sentence[0]$embedding$size())

#> torch.Size([9984])

Layer Mean

Transformer-based models have a certain number of layers. By default, all layers you select are concatenated, as explained above. Alternatively, you can set layer_mean = TRUE to take the mean over all selected layers. The resulting vector then always has the same dimensionality as a single layer:

# initialize transformer embeddings; the model is downloaded from Hugging Face

embeddings <- TransformerWordEmbeddings('bert-base-uncased', layers = "all", layer_mean = TRUE)

# create a sentence object

sentence <- Sentence("The Oktoberfest is the world's largest Volksfest .")

# embed words in sentence

embeddings$embed(sentence)

#> [[1]]

#> Sentence[9]: "The Oktoberfest is the world's largest Volksfest ."

Fine-tuneable or Not

In some setups, you may wish to fine-tune the transformer embeddings. In this case, set fine_tune = TRUE when constructing the embeddings. When fine-tuning, you should also use only the topmost layer, so it is best to set layers = '-1'.

# enable fine-tuning and use only the topmost layer

TransformerWordEmbeddings <- flair_embeddings()$TransformerWordEmbeddings

embeddings <- TransformerWordEmbeddings('bert-base-uncased', fine_tune=TRUE, layers='-1')

embeddings$embed(sentence)

#> [[1]]

#> Sentence[9]: "The Oktoberfest is the world's largest Volksfest ."

The following prints a tensor that now has a gradient function and can be fine-tuned if you use it in a training routine.

print(sentence[0]$embedding)

#> tensor([-6.5871e-01, 1.0410e-01, 3.4632e-01, -3.3775e-01, -2.1013e-01,

#> -1.3036e-02, 5.1998e-01, 1.6574e+00, -5.2521e-02, -4.8632e-02,

#> -7.8968e-01, -9.5547e-01, -1.9723e-01, 9.4999e-01, -1.0337e+00,

#> 8.6668e-02, 9.8104e-02, 5.6511e-02, 3.1075e-02, 2.4157e-01,

#> -1.1427e-01, -2.3692e-01, -2.0700e-01, 7.7985e-01, 2.5460e-01,

#> -5.0831e-03, -2.4110e-01, 2.2436e-01, -7.3249e-02, -8.1094e-01,

#> -1.8778e-01, 2.1219e-01, -5.9514e-01, 6.3129e-02, -4.8880e-01,

#> -3.2300e-02, -1.9125e-02, -1.0991e-01, -1.5604e-02, 4.3068e-01,

#> -1.7968e-01, -5.4499e-01, 7.0608e-01, -4.0512e-01, 1.7761e-01,

#> -8.5820e-01, 2.3438e-02, -1.4981e-01, -9.0368e-01, -2.1097e-01,

#> -3.3535e-01, 1.4920e-01, -7.4529e-03, 1.0239e+00, -6.1777e-02,

#> 3.3913e-01, 8.5811e-02, 6.9401e-01, -7.7483e-02, 3.1484e-01,

#> -4.3921e-01, 1.2933e+00, 5.7995e-03, -7.0992e-01, 2.7525e-01,

#> 8.8792e-01, 2.6303e-03, 1.3640e+00, 5.6885e-01, -2.4904e-01,

#> -4.5158e-02, -1.7575e-01, -3.4730e-01, 5.8362e-02, -2.0346e-01,

#> -1.2505e+00, -3.0592e-01, -3.6105e-02, -2.4066e-01, -5.1250e-01,

#> 2.6930e-01, 1.4068e-01, 3.4056e-01, 7.3297e-01, 2.6848e-01,

#> 2.4303e-01, -9.4885e-01, -9.0367e-01, -1.3184e-01, 6.7348e-01,

#> -3.2994e-02, 4.7660e-01, -7.1617e-03, -3.4141e-01, 6.8473e-01,

#> -4.4869e-01, -4.9831e-01, -8.0143e-01, 1.4073e+00, 5.3251e-01,

#> 2.4643e-01, -4.2529e-01, 9.1615e-02, 6.4496e-01, 1.7931e-01,

#> -2.1473e-01, 1.5447e-01, -3.2978e-01, 1.0799e-01, -1.9402e+00,

#> -5.0380e-01, -2.7636e-01, -1.1227e-01, 1.1576e-01, 2.5885e-01,

#> -1.7916e-01, 6.6166e-01, -9.6098e-01, -5.1242e-01, -3.5424e-01,

#> 2.1383e-01, 6.6456e-01, 2.5498e-01, 3.7250e-01, -1.1821e+00,

#> -4.9551e-01, -2.0858e-01, 1.1511e+00, -1.0366e-02, -1.0682e+00,

#> 3.7277e-01, 6.4048e-01, 2.3308e-01, -9.3824e-01, 9.5015e-02,

#> 5.7904e-01, 6.3969e-01, 8.2359e-02, -1.4075e-01, 3.0107e-01,

#> 3.5827e-03, -4.4684e-01, -2.6913e+00, -3.3933e-01, 2.8731e-03,

#> -1.3639e-01, -7.1054e-01, -1.1048e+00, 2.2374e-01, 1.1830e-01,

#> 4.8416e-01, -2.9110e-01, -6.7650e-01, 2.3202e-01, -1.0123e-01,

#> -1.9174e-01, 4.9959e-02, 5.2067e-01, 1.3272e+00, 6.8250e-01,

#> 5.5332e-01, -1.0886e+00, 4.5160e-01, -1.5010e-01, -9.8074e-01,

#> 8.5110e-02, 1.6498e-01, 6.6032e-01, 1.0815e-02, 1.8952e-01,

#> -5.6607e-01, -1.3743e-02, 9.1171e-01, 2.7812e-01, 2.9551e-01,

#> -3.5637e-01, 3.2030e-01, 5.6738e-01, -1.5707e-01, 3.5326e-01,

#> -4.7747e-01, 7.8646e-01, 1.3765e-01, 2.2440e-01, 4.2422e-01,

#> -2.6504e-01, 2.2014e-02, -6.7154e-01, -8.7998e-02, 1.4284e-01,

#> 4.0983e-01, 1.0933e-02, -1.0704e+00, -1.9350e-01, 6.0051e-01,

#> 5.0545e-02, 1.1434e-02, -8.0243e-01, -6.6871e-01, 5.3953e-01,

#> -5.9856e-01, -1.6915e-01, -3.5307e-01, 4.4568e-01, -7.2761e-01,

#> 1.1629e+00, -3.1553e-01, -7.9747e-01, -2.0582e-01, 3.7320e-01,

#> 5.9379e-01, -3.1898e-01, -1.6932e-01, -6.2492e-01, 5.7047e-01,

#> -2.9779e-01, -5.9106e-01, 8.5436e-02, -2.1839e-01, -2.2214e-01,

#> 7.9233e-01, 8.0537e-01, -5.9785e-01, 4.0474e-01, 3.9266e-01,

#> 5.8169e-01, -5.2506e-01, 6.9786e-01, 1.1163e-01, 8.7435e-02,

#> 1.7549e-01, 9.1438e-02, 5.8816e-01, 6.4338e-01, -2.7138e-01,

#> -5.3449e-01, -1.0168e+00, -5.1338e-02, 3.0099e-01, -7.6696e-02,

#> -2.1126e-01, 5.8143e-01, 1.3599e-01, 6.2759e-01, -6.2810e-01,

#> 5.9966e-01, 3.5836e-01, -3.0706e-02, 1.5563e-01, -1.4016e-01,

#> -2.0155e-01, -1.3755e+00, -9.1876e-02, -6.9892e-01, 7.9438e-02,

#> -4.2926e-01, 3.7988e-01, 7.6741e-01, 5.3094e-01, 8.5981e-01,

#> 4.4185e-02, -6.3507e-01, 3.9587e-01, -3.6635e-01, -7.0770e-01,

#> 8.3675e-04, -3.0055e-01, 2.1360e-01, -4.1649e-01, 6.9457e-01,

#> -6.2715e-01, -5.1101e-01, 3.0331e-01, -2.3804e+00, -1.0566e-02,

#> -9.4488e-01, 4.3317e-02, 2.4188e-01, 1.9204e-02, 1.5718e-03,

#> -3.0374e-01, 3.1933e-01, -7.4432e-01, 1.4599e-01, -5.2102e-01,

#> -5.2269e-01, 1.3274e-01, -2.8936e-01, 4.1706e-02, 2.6143e-01,

#> -4.4796e-01, 7.3136e-01, 6.3893e-02, 4.7398e-01, -5.1062e-01,

#> -1.3705e-01, 2.0763e-01, -3.9115e-01, 2.8822e-01, -3.5283e-01,

#> 3.4881e-02, -3.3602e-01, 1.7210e-01, 1.3537e-02, -5.3036e-01,

#> 1.2847e-01, -4.5576e-01, -3.7251e-01, -3.2254e+00, -3.1650e-01,

#> -2.6144e-01, -9.4983e-02, 2.7651e-02, -2.3750e-01, 3.1001e-01,

#> 1.1428e-01, -1.2870e-01, -4.7496e-01, 4.4594e-01, -3.6138e-01,

#> -3.1009e-01, -9.9613e-02, 5.3968e-01, 1.2840e-02, 1.4507e-01,

#> -2.5181e-01, 1.9310e-01, 4.1073e-01, 5.9776e-01, -2.5585e-01,

#> 5.7184e-02, -5.1505e-01, -6.8708e-02, 4.7767e-01, -1.2078e-01,

#> -5.0894e-01, -9.2884e-01, 7.8471e-01, 2.0216e-01, 4.3243e-01,

#> 3.2803e-01, -1.0122e-01, 3.3529e-01, -1.2183e-01, -5.5060e-01,

#> 3.5427e-01, 7.4558e-02, -3.1411e-01, -1.7512e-01, 2.2485e-01,

#> 4.2295e-01, 7.7110e-02, 1.8063e+00, 7.6634e-03, -1.1083e-02,

#> -2.8603e-02, 7.7143e-02, 8.2345e-02, 8.0272e-02, -1.1858e+00,

#> 2.0523e-01, 3.4053e-01, 2.0424e-01, -2.0574e-02, 3.0466e-01,

#> -2.1858e-01, 6.3737e-01, -5.6264e-01, 1.4153e-01, 2.4319e-01,

#> -5.6688e-01, 7.2375e-02, -2.9329e-01, 4.6561e-02, 1.8977e-01,

#> 2.4977e-01, 9.1892e-01, 1.1346e-01, 3.8588e-01, -3.5543e-01,

#> -1.3380e+00, -8.5644e-01, -5.5443e-01, -7.2317e-01, -2.9225e-01,

#> -1.4389e-01, 6.9715e-01, -5.9852e-01, -6.8932e-01, -6.0952e-01,

#> 1.8234e-01, -7.5841e-02, 3.6445e-01, -3.8286e-01, 2.6545e-01,

#> -2.6569e-01, -4.9999e-01, -3.8354e-01, -2.2809e-01, 8.8314e-01,

#> 2.9041e-01, 5.4803e-01, -1.0668e+00, 4.7406e-01, 7.8804e-02,

#> -1.1559e+00, -3.0649e-01, 6.0479e-02, -7.1279e-01, -4.3335e-01,

#> -8.2428e-04, -1.0236e-01, 3.5497e-01, 1.8665e-01, 1.2045e-01,

#> 1.2071e-01, 6.2911e-01, 3.1421e-01, -2.1635e-01, -8.9416e-01,

#> 6.6361e-01, -9.2981e-01, 6.9193e-01, -2.5403e-01, -2.5835e-02,

#> 1.2342e+00, -6.5908e-01, 7.5741e-01, 2.9014e-01, 3.0760e-01,

#> -1.0249e+00, -2.7089e-01, 4.6132e-01, 6.1510e-02, 2.5385e-01,

#> -5.2075e-01, -3.5107e-01, 3.3694e-01, -2.5047e-01, -2.7855e-01,

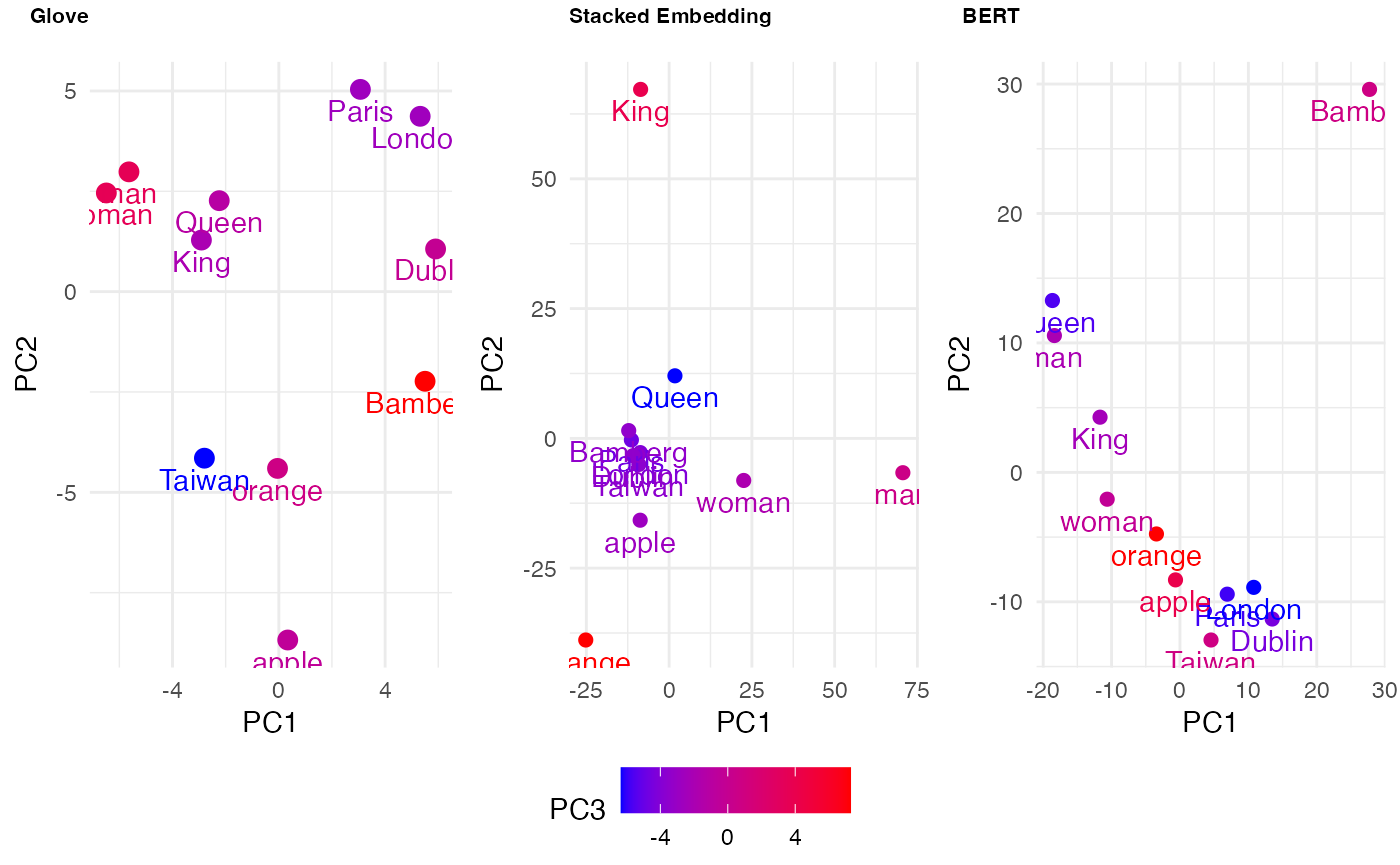

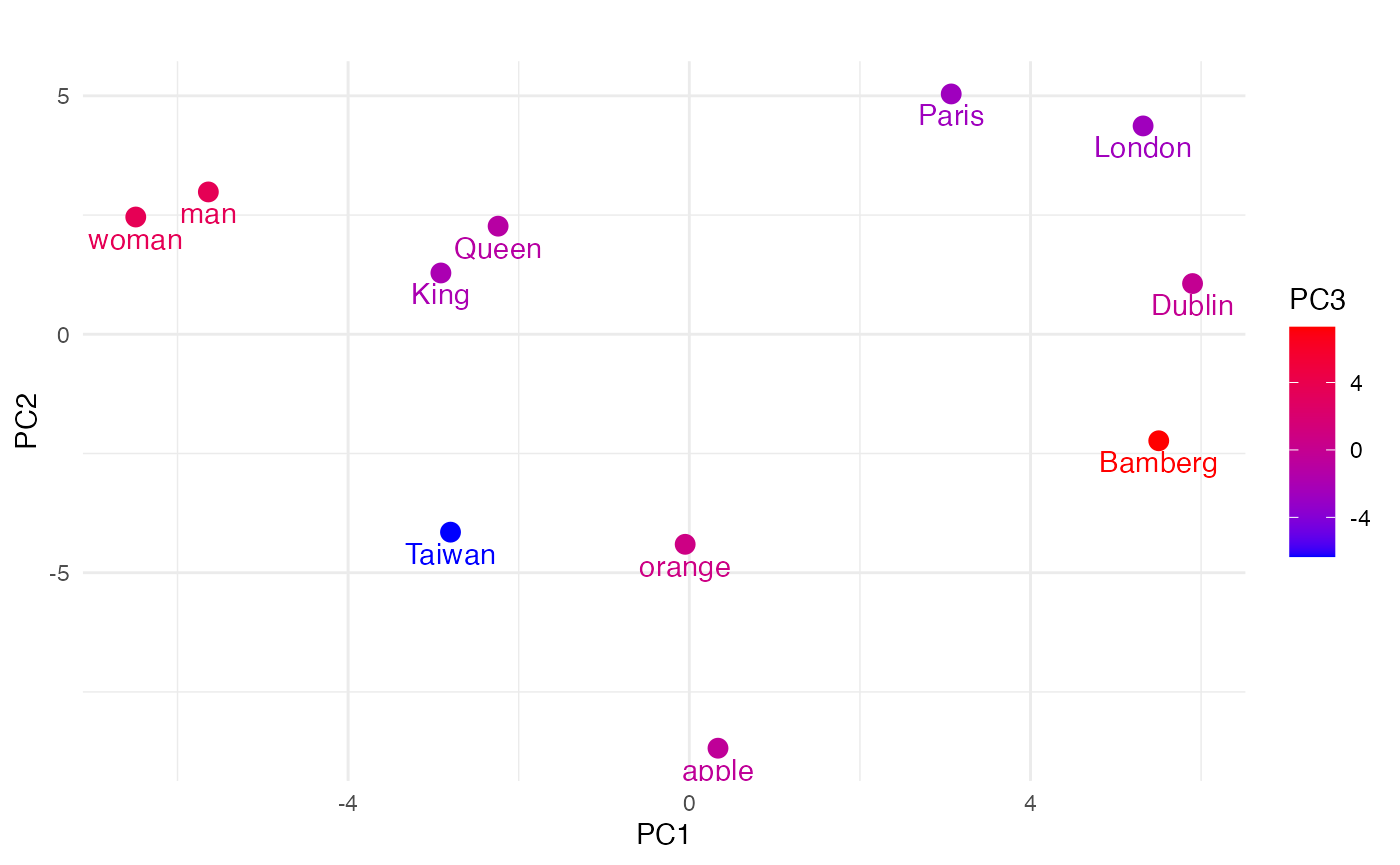

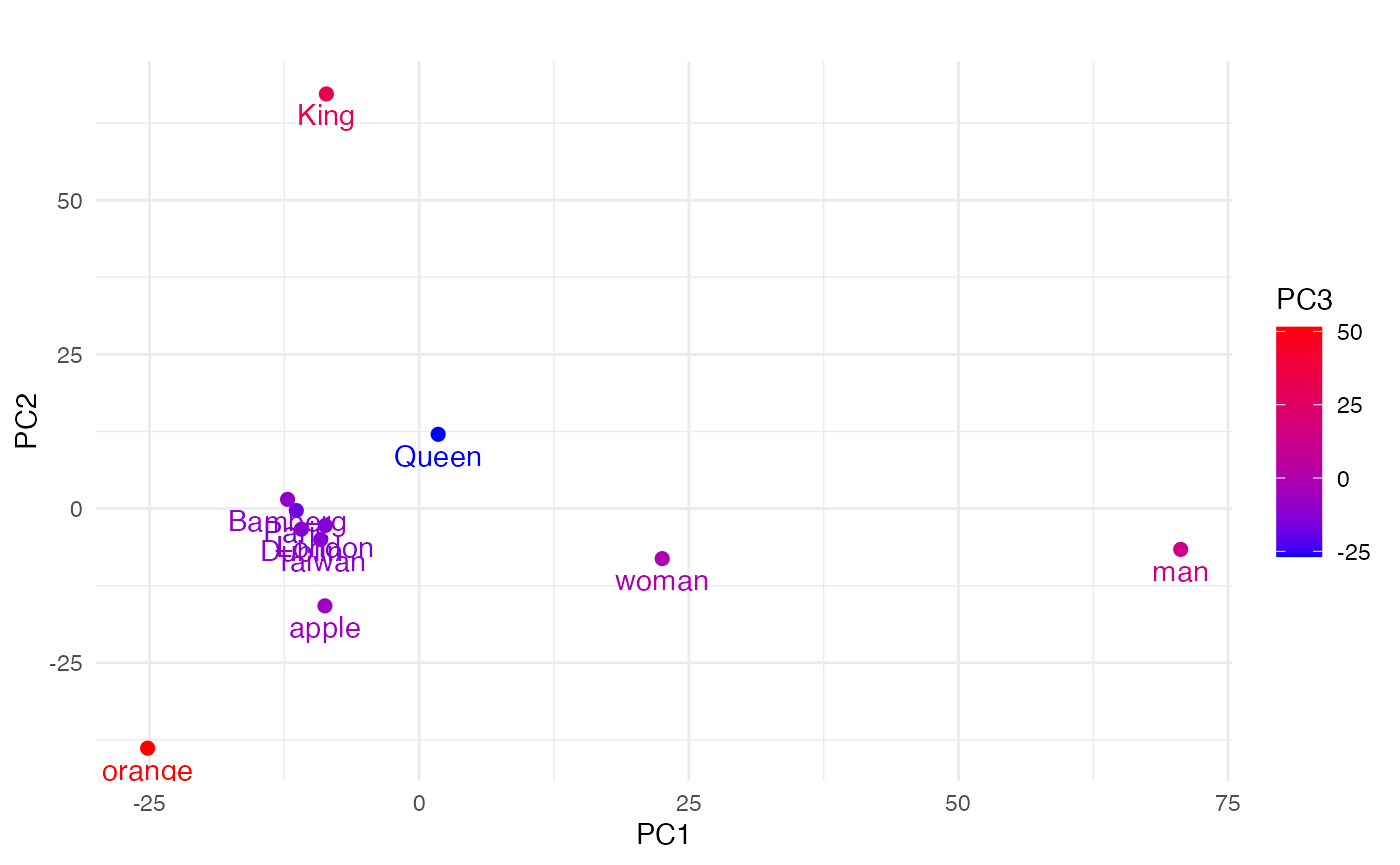

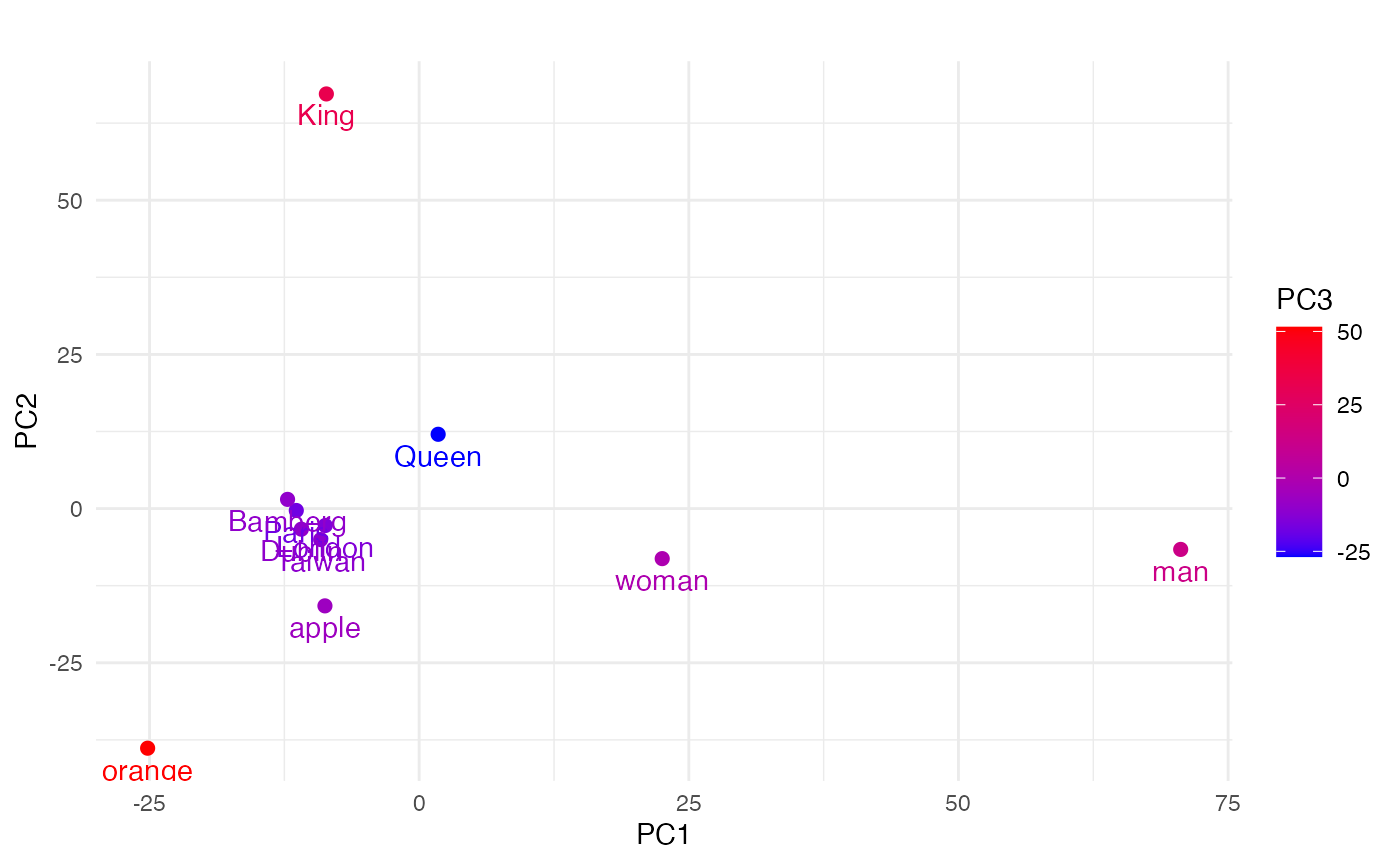

#> 2.0280e-01, -1.5703e-01, 4.1619e-02, 1.4451e-01, -1.6666e-01,